“Well, Congressman, I view our responsibility as not just building services that people like to use, but making sure that those services are also good for people and good for society overall.” — Mark Zuckerberg, 2018

In the previous two posts in this series, I did a long but briskly paced early history of Meta and the internet in Myanmar—and the hateful and dehumanizing speech that came with it—and then looked at what an outside-the-company view could reveal about Meta’s role in the genocide of the Rohingya in 2016 and 2017.

In this post, I’ll look at what two whistleblowers and a crucial newspaper investigation reveal about what was happening inside Meta at the time. Specifically, the disclosed information:

- gives us a quantitative view of Meta’s content moderation performance, which in turn highlights a deceptive PR routine Meta uses when questioned about moderation;

- clarifies what Meta knew about the effects of its algorithmic recommendation systems; and

- reveals a parasitic takeover of the Facebook platform by covert influence campaigns around the world—including in Myanmar.

Before we get into that, a brief personal note. There are few ways to be in the world that I enjoy less than “breathless conspiratorial.” That rhetorical mode muddies the water when people most need clarity and generates an emotional charge that works against effective decision-making. I really don’t like it. So it’s been unnerving to synthesize a lot of mostly public information and come up with results that wouldn’t look completely out of place in one of those overwrought threads.

I don’t know what to do with that except to be forthright but not dramatic, and to treat my readers’ endocrine systems with respect by avoiding needless flourishes. But the story is just rough and many people in it do bad things. (You can read my meta-post about terminology and sourcing if you want to see me agonize over the minutiae.)

Content warnings for the post: The whole series is about genocide and hate speech. There are no graphic descriptions or images, and this post includes no slurs or specific examples of hateful and inciting messages, but still. (And there’s a fairly unpleasant photograph of a spider at about the 40% mark.)

The disclosures

When Frances Haugen, a former product manager on Meta’s Civic Integrity team, disclosed a ton of internal Meta docs to the SEC—and several media outlets—in 2021, I didn’t really pay attention. I was pandemic-tired and I didn’t think there’d be much in there that I didn’t know. I was wrong!

Frances Haugen’s disclosures are of generational importance, especially if you’re willing to dig down past the US-centric headlines. Haugen has stated that she came forward because of things outside the US—Myanmar and its horrific echo years later in Ethiopia, specifically, and the likelihood that it would all just keep happening. So it makes sense that the docs she disclosed would be highly relevant, which they are.

There are eight disclosures in the bundle of information Haugen delivered via lawyers to the SEC, and each is about one specific way Meta “misled investors and the public.” Each disclosure takes the form of a letter (which probably has a special legal name I don’t know) and a huge stack of primary documents. The majority of those documents—internal posts, memos, emails, comments—haven’t yet been made public, but the letters themselves include excerpts, and subsequent media coverage and straightforward doc dumps have revealed a little bit more. When I cite the disclosures, I’ll point to the place where you can read the longest chunk of primary text—often that’s just the little excerpts in the letters, but sometimes we have a whole—albeit redacted—document to look at.

In the fourth post in the series, I’ll say a little about what the documents reveal about Meta’s culture. Here, I’ll just note that many people inside Meta clearly tried to make things better. How well that worked is another question.

Before continuing, I think it’s only fair to note that the disclosures we see in public are necessarily those that run counter to Meta’s public statements, because otherwise there would be no need to disclose them. And because we’re only getting excerpts, there’s obviously a ton of context missing—including, presumably, dissenting internal views. I’m not interested in making a handwavey case based on one or two people inside a company making wild statements. So I’m only emphasizing points that are supported in multiple, specific excerpts.

Let’s start with content moderation and what the disclosures have to say about it.

How much dangerous stuff gets taken down?

We don’t know how much “objectionable content” is actually on Facebook—or on Instagram, or Twitter, or any other big platform. The companies running those platforms don’t know the exact numbers either, but what they do have are reasonably accurate estimates. We know they have estimates because sampling and human-powered data classification is how you train the AI classifiers required to do content-based moderation—removing posts and comments—at mass scale. And that process necessarily lets you estimate from your samples roughly how much of a given kind of problem you’re seeing. (This is pretty common knowledge, but it’s also confirmed in an internal doc I quote below.)
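For readers who want to see how a labeled sample turns into an estimate, here is a minimal sketch. It assumes a simple random sample and a textbook normal-approximation interval; the function name and the numbers are hypothetical and are not drawn from any Meta document.

```python
import math


def estimate_prevalence(violating_in_sample, sample_size, z=1.96):
    """Estimate the share of violating content from a labeled random sample.

    Returns (point_estimate, low, high), where low/high are an approximate
    95% interval using the normal approximation (an assumption of this sketch).
    """
    p = violating_in_sample / sample_size
    margin = z * math.sqrt(p * (1 - p) / sample_size)
    return p, max(0.0, p - margin), min(1.0, p + margin)


# Hypothetical numbers: reviewers label 100,000 randomly sampled posts
# and mark 150 of them as hate speech.
p, low, high = estimate_prevalence(150, 100_000)
print(f"estimated prevalence: {p:.3%} (roughly {low:.3%} to {high:.3%})")
```

The point is only that anyone labeling samples at this scale ends up holding a usable estimate of how much of a problem exists, whether or not they publish it.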

The platforms aren’t sharing those estimates with us because no one’s forcing them to. And probably also because, based on what we’ve seen from the disclosures, the numbers are quite bad. So I want to look at how bad they are, or recently were, on Facebook. Alongside that, I want to point out the most common way Meta distracts reporters and governing bodies from its terrible stats, because I think it’s a very useful thing to be able to spot.

One of Frances Haugen’s SEC disclosure letters is about Meta’s failures to moderate hate speech. It’s helpfully titled, “Facebook misled investors and the public about ‘transparency’ reports boasting proactive removal of over 90% of identified hate speech when internal records show that ‘as little as 3-5% of hate’ speech is actually removed.”1

Here’s the excerpt from the internal Meta document from which that “3–5%” figure is drawn:

…we’re deleting less than 5% of all of the hate speech posted to Facebook. This is actually an optimistic estimate—previous (and more rigorous) iterations of this estimation exercise have put it closer to 3%, and on V&I [violence and incitement] we’re deleting somewhere around 0.6%…we miss 95% of violating hate speech.2

Here’s another quote from a different memo excerpted in the same disclosure letter:

[W]e do not … have a model that captures even a majority of integrity harms, particularly in sensitive areas … We only take action against approximately 2% of the hate speech on the platform. Recent estimates suggest that unless there is a major change in strategy, it will be very difficult to improve this beyond 10-20% in the short-medium term.3

Another estimate from a third internal document:

We seem to be having a small impact in many language-country pairs on Hate Speech and Borderline Hate, probably ~3% … We are likely having little (if any) impact on violence.4

Here’s a fourth one, specific to a study about Facebook in Afghanistan, which I include to help contextualize the global numbers:

While Hate Speech is consistently ranked as one of the top abuse categories in the Afghanistan market, the action rate for Hate Speech is worryingly low at 0.23 per cent.5

I don’t think these figures need a ton of commentary, honestly. I would agree that removing less than a quarter of a percent of hate speech is indeed “worryingly low,” as is removing 0.6% of violence and incitement messages. I think removing even 5% of hate speech—the highest number cited in the disclosures—is objectively terrible performance, and I think most people outside of the tech industry would agree with that. Which is presumably why Meta has put a ton of work into muddying the waters around content moderation.

So back to that SEC letter with the long name. It points something out, which is that Meta has long claimed that Facebook “proactively” detects between 95% (in 2020, globally) and 98% (in Myanmar, in 2021) of all the posts it removes because they’re hate speech—before users even see them.

At a glance, this looks good. Ninety-five percent is a lot! But since we know from the disclosed material that based on internal estimates the takedown rates for hate speech are at or below 5%, what’s going on here?

Here’s what Meta is actually saying: Sure, they might identify and remove only a tiny fraction of dangerous and hateful speech on Facebook, but of that tiny fraction, their AI classifiers catch about 95–98% before users report it. That’s literally the whole game, here.

So…the most generous number from the disclosed memos has Meta removing 5% of hate speech on Facebook. That would mean that for every 2,000 hateful posts or comments, Meta removes about 100: 95 automatically and 5 via user reports. In this example, 1,900 of the original 2,000 messages remain up and circulating. So based on the generous 5% removal rate, their AI systems nailed…4.75% of hate speech. That’s the level of performance they’re bragging about.
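If you want to check that arithmetic or plug in the less generous removal rates, here is a small sketch. The function and key names are mine, made up for illustration; the inputs are the 2,000-post example, the 5% removal rate from the disclosed memos, and the 95% "proactive" rate from Meta’s public claims.

```python
from fractions import Fraction


def removal_breakdown(total_posts, removal_rate, proactive_rate):
    """Split a pile of hateful posts into removed vs. still-up counts.

    removal_rate:   share of all hate speech that gets removed at all
    proactive_rate: share of the *removed* posts that classifiers flagged
                    before any user report (the number Meta advertises)
    """
    removed = total_posts * removal_rate
    caught_by_ai = removed * proactive_rate
    return {
        "removed": removed,
        "caught_by_ai": caught_by_ai,
        "caught_by_user_reports": removed - caught_by_ai,
        "still_up": total_posts - removed,
        "ai_share_of_all_hate_speech": caught_by_ai / total_posts,
    }


# Exact fractions avoid floating-point noise in the percentages.
result = removal_breakdown(2000, Fraction(5, 100), Fraction(95, 100))
for name, value in result.items():
    print(name, float(value))
```

Swapping in the 3% or 2% removal rates from the other memos pushes the last figure lower still, while the advertised 95% never changes.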

You don’t need to take my word for any of this—Wired ran a critique breaking it down in 2021 and Ranking Digital Rights has a strongly worded post about what Meta claims in public vs. what the leaked documents reveal to be true, including this content moderation math runaround.

Meta does this particular routine all the time.

The shell game

Here’s Mark Zuckerberg on April 10th, 2018, answering a question in front of the Senate’s Commerce and Judiciary committees. He says that hate speech is really hard to find automatically and then pivots to something that he says is a real success, which is “terrorist propaganda,” which he simplifies immediately to “ISIS and Al Qaida content.” But that stuff? No problem:

Contrast [hate speech], for example, with an area like finding terrorist propaganda, which we’ve actually been very successful at deploying A.I. tools on already. Today, as we sit here, 99 percent of the ISIS and Al Qaida content that we take down on Facebook, our A.I. systems flag before any human sees it. So that’s a success in terms of rolling out A.I. tools that can proactively police and enforce safety across the community.6

So that’s 99% of…the unknown percentage of this kind of content that’s actually removed.

Zuckerberg actually tries to do the same thing the next day, April 11th, before the House Energy and Commerce Committee, but he whiffs the maneuver:

…we’re getting good in certain areas. One of the areas that I mentioned earlier was terrorist content, for example, where we now have A.I. systems that can identify and—and take down 99 percent of the al-Qaeda and ISIS-related content in our system before someone—a human even flags it to us. I think we need to do more of that.7

The version Zuckerberg says right there, on April 11th, is what I’m pretty sure most people think Meta means when they go into this stuff—but as stated, it’s a lie.

No one in those hearings presses Zuckerberg on those numbers—and when Meta repeats the move in 2020, plenty of reporters fall into the trap and make untrue claims favorable to Meta:

…between its AI systems and its human content moderators, Facebook says it’s detecting and removing 95% of hate content before anyone sees it. —Fast Company

About 95 percent of hate speech on Facebook gets caught by algorithms before anyone can report it… —Ars Technica

Facebook said it took action on 22.1 million pieces of hate speech content to its platform globally last quarter and about 6.5 million pieces of hate speech content on Instagram. On both platforms, it says about 95% of that hate speech was proactively identified and stopped by artificial intelligence. —Axios

The company said it now finds and eliminates about 95% of the hate speech violations using automated software systems before a user ever reports them… —Bloomberg

This is all not just wrong but wildly wrong if you have the internal numbers in front of you.

I’m hitting this point so hard not because I want to point out ~corporate hypocrisy~ or whatever, but because this deceptive runaround is consequential for two reasons: The first is that it provides instructive context about how to interpret Meta’s public statements. The second is that it actually says extremely dire things about Meta’s only hope for content-based moderation at scale, which is their AI-based classifiers.

Here’s Zuckerberg saying as much to a congressional committee:

…one thing that I think is important to understand overall is just the sheer volume of content on Facebook makes it so that we can’t—no amount of people that we can hire will be enough to review all of the content.… We need to rely on and build sophisticated A.I. tools that can help us flag certain content.8

This statement is kinda disingenuous in a couple of ways, but the central point is true: the scale of these platforms makes human review incredibly difficult. And Meta’s reasonable-sounding explanation is that this means they have to focus on AI. But by their own internal estimates, Meta’s AI classifiers are only identifying something in the range of 4.75% of hate speech on Facebook, and often considerably less. That seems like a dire stat for the thing you’re putting forward to Congress as your best hope!

The same disclosed internal memo that told us Meta was deleting between 3% and 5% of hate speech had this to say about the potential of AI classifiers to handle mass-scale content removals:

[O]ur current approach of grabbing a hundred thousand pieces of content, paying people to label them as Hate or Not Hate, training a classifier, and using it to automatically delete content at 95% precision is just never going to make much of a dent.9

Getting content moderation to work for even extreme and widely reviled categories of speech is obviously genuinely difficult, so I want to be extra clear about a foundational piece of my argument.

Responsibility for the machine

I think that if you make a machine and hand it out for free to everyone in the world, you’re at least partially responsible for the harm that the machine does.

“It’s very difficult” perhaps doesn’t carry as much weight when you’re pulling in $40 billion in annual profits, as Meta did in 2021. Is it forty billion dollars difficult?

Also, even if you say, “but it’s very difficult to make the machine safer!” I don’t think that reduces your responsibility so much as it makes you look shortsighted and bad at machines.

Beyond the bare fact of difficulty, though, I think the more the harm the machine does deviates from what people might expect a machine that looks like this to do, the more responsibility you bear: If you offer everyone in the world a grenade, I think that’s bad, but also it won’t be surprising when people who take the grenade get hurt or hurt someone else. But when you offer everyone a cute little robot assistant that turns out to be easily repurposed as a rocket launcher, I think that falls into another category.

Especially if you see that people are using your cute little robot assistant to murder thousands of people and elect not to disarm it because that would make it a little less cute.

This brings us to the algorithms.

“Core product mechanics”

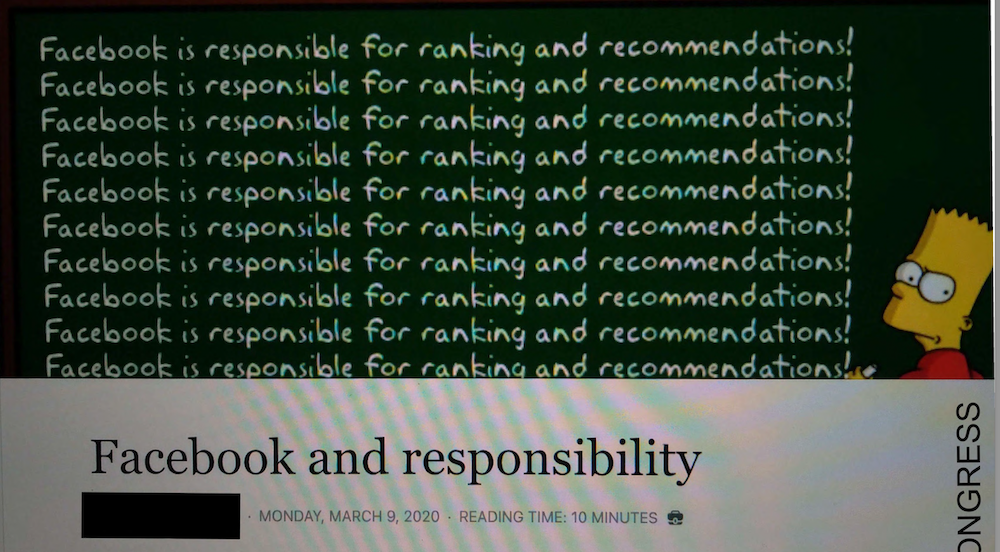

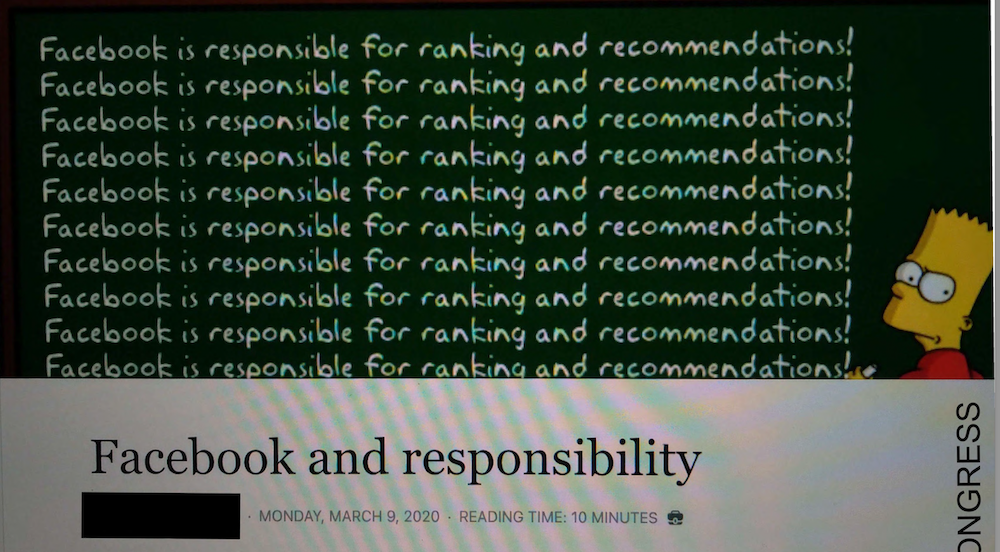

Screencap of an internal Meta document titled Facebook and responsibility, with a header image of the Bart Simpson writing on the chalkboard meme in which the writing on the board reads, Facebook is responsible for ranking and recommendations!

From a screencapped version of “Facebook and responsibility,” one of the disclosed internal documents.

In the second post in this series, I quoted people in Myanmar who were trying to cope with an overwhelming flood of hateful and violence-inciting messages. It felt obvious on the ground that the worst, most dangerous posts were getting the most juice.

Thanks to the Haugen disclosures, we can confirm that this was also understood inside Meta.

The disclosed documents largely come from the years shortly after the peak of the genocide of the Rohingya in Myanmar. They’re almost all retrospective, so I think they’re highly applicable to the period I’ve been looking at.

In 2019, a Meta employee wrote a memo called “What is Collateral damage.” It included these statements (my emphasis):

We have evidence from a variety of sources that hate speech, divisive political speech, and misinformation on Facebook and the family of apps are affecting societies around the world. We also have compelling evidence that our core product mechanics, such as virality, recommendations, and optimizing for engagement, are a significant part of why these types of speech flourish on the platform.

If integrity takes a hands-off stance for these problems, whether for technical (precision) or philosophical reasons, then the net result is that Facebook, taken as a whole, will be actively (if not necessarily consciously) promoting these types of activities. The mechanics of our platform are not neutral.10

If you work in tech or if you’ve been following mainstream press accounts about Meta over the years, you presumably already know this, but I think it’s useful to establish this piece of the internal conversation.

Here’s a long breakdown from 2020 about the specific parts of the platform that actively put “unconnected content”—messages that aren’t from friends or Groups people subscribe to—in front of Facebook users. It comes from an internal post called “Facebook and responsibility” (my emphasis):

Facebook is most active in delivering content to users on recommendation surfaces like “Pages you may like,” “Groups you should join,” and suggested videos on Watch. These are surfaces where Facebook delivers unconnected content. Users don’t opt-in to these experiences by following other users or Pages. Instead, Facebook is actively presenting these experiences…

News Feed ranking is another way Facebook becomes actively involved in these harmful experiences. Of course users also play an active role in determining the content they are connected to through feed, by choosing who to friend and follow. Still, when and whether a user sees a piece of content is also partly determined by the ranking scores our algorithms assign, which are ultimately under our control. This means, according to ethicists, Facebook is always at least partially responsible for any harmful experiences on News Feed.

This doesn’t owe to any flaw with our News Feed ranking system, it’s just inherent to the process of ranking. To rank items in Feed, we assign scores to all the content available to a user and then present the highest-scoring content first. Most feed ranking scores are determined by relevance models. If the content is determined to be an integrity harm, the score is also determined by some additional ranking machinery to demote it lower than it would have appeared given its score. Crucially, all of these algorithms produce a single score; a score Facebook assigns. Thus, there is no such thing as inaction on Feed. We can only choose to take different kinds of actions.11
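The logic in that excerpt can be illustrated with a toy sketch. To be clear, this is not Meta’s ranking code: the `Post` class, the relevance numbers, and the multiplicative `demotion_factor` are all hypothetical stand-ins for the "relevance models" and "additional ranking machinery" the memo mentions.

```python
from dataclasses import dataclass


@dataclass
class Post:
    name: str
    relevance: float              # hypothetical relevance-model output
    integrity_harm: bool = False  # hypothetical classifier flag


def final_score(post, demotion_factor):
    """Every post gets exactly one score; 1.0 means 'leave it alone'."""
    if post.integrity_harm:
        return post.relevance * demotion_factor
    return post.relevance


def rank_feed(posts, demotion_factor):
    """Present the highest-scoring content first."""
    return sorted(posts, key=lambda p: final_score(p, demotion_factor), reverse=True)


posts = [
    Post("friend photo", 0.6),
    Post("inciting post", 0.9, integrity_harm=True),
    Post("local news", 0.5),
]

# "Hands-off" is still a choice: the flagged post keeps the top slot.
hands_off = [p.name for p in rank_feed(posts, demotion_factor=1.0)]
# Demoting it is a different choice made with the same single score.
demoted = [p.name for p in rank_feed(posts, demotion_factor=0.5)]
print(hands_off)
print(demoted)
```

Either way the platform assigns the score, which is the memo’s point about there being no such thing as inaction on Feed.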

The next few quotes will apply directly to US concerns, but they’re clearly broadly applicable to the 90% of Facebook users who are outside the US and Canada, and whose disinfo concerns receive vastly fewer resources.

This one is from an internal Meta doc from November 5, 2020:

Not only do we not do something about combustible election misinformation in comments, we amplify them and give them broader distribution.12

When Meta staff tried to take the measure of their own recommendation systems’ behavior, they found that the systems led a fresh, newly made account into disinfo-infested waters very quickly:

After a small number of high quality/verified conservative interest follows… within just one day Page recommendations had already devolved towards polarizing content.

Although the account set out to follow conservative political news and humor content generally, and began by following verified/high quality conservative pages, Page recommendations began to include conspiracy recommendations after only 2 days (it took <1 week to get a QAnon recommendation!)

Group recommendations were slightly slower to follow suit - it took 1 week for in-feed GYSJ recommendations to become fully political/right-leaning, and just over 1 week to begin receiving conspiracy recommendations.13

The same document reveals that several of the Pages and Groups Facebook’s systems recommend to its test user show multiple signs of association with “coordinated inauthentic behavior,” aka foreign and domestic covert influence campaigns, which we’ll get to very soon.

Before that, I want to offer just one example of algorithmic malpractice from Myanmar.

Flower speech

Panzagar campaign illustration depicting a Burmese girl with thanaka on her cheek and illustrated flowers coming from her mouth, flowing toward speech bubbles labeled with the names of Burmese towns and cities.

Back in 2014, Burmese organizations including MIDO and Yangon-based tech accelerator Phandeeyar collaborated on a carefully calibrated counter-speech project called Panzagar (flower speech). The campaign—which was designed to be delivered in person, in printed materials, and online—encouraged ordinary Burmese citizens to push back on hate speech in Myanmar.

Later that year, Meta, which had just been implicated in the deadly communal violence in Mandalay, joined with the Burmese orgs to turn their imagery into digital Facebook stickers that users could apply to posts calling for things like the annihilation of the Rohingya people. The stickers depict cute cartoon characters, several of which offer admonishments like, “Don’t be the source of a fire,” “Think before you share,” “Don’t you be spawning hate,” and “Let it go buddy!”

The campaign was widely and approvingly covered by western organizations and media outlets, and Meta got a lot of praise for its involvement.

But according to members of the Burmese civil society coalition behind the campaign, it turned out that the Panzagar Facebook stickers—which were explicitly designed as counterspeech—“carried significant weight in their distribution algorithm,” so anyone who used them to counter hateful and violent messages inadvertently helped those messages gain wider distribution.14

I mention the Panzagar incident not only because it’s such a head-smacking example of Meta favoring cosmetic, PR-friendly tweaks over meaningful redress, or because it reveals plain incompetence in the face of already-serious violence, but also because it gets to what I see as a genuinely foundational problem with Meta in Myanmar.

Even when the company was finally (repeatedly) forced to take notice of the dangers it was contributing to, actions that could actually have made a difference—like rolling out new programs only after local consultation and adaptation, scaling up culturally and linguistically competent human moderation teams in tandem with increasing uptake, and above all, altering the design of the product to stop amplifying the most charged messages—remained not just undone, but unthinkable because they were outside the company’s understanding of what the product’s design should take into consideration.

This refusal to connect core product design with accelerating global safety problems means that attempts at prevention and repair are either relegated to window-dressing or are actually counterproductive, as in the case of the Panzagar stickers, which absorbed the energy and efforts of local Burmese civil society groups and turned them into something that made the situation worse.

In a 2018 interview with Frontline about problems with Facebook, Meta’s former Chief Security Officer, Alex Stamos, returns again and again to the idea that security work properly happens at the product design level. Toward the end of the interview, he gets very clear:

Stamos: I think there was a structural problem here in that the people who were dealing with the downsides were all working together over kind of in the corner, right, so you had the safety and security teams, tight-knit teams that deal with all the bad outcomes, and we didn’t really have a relationship with the people who are actually designing the product.

Interviewer: You did not have a relationship?

Stamos: Not like we should have, right? It became clear—one of the things that became very clear after the election was that the problems that we knew about and were dealing with before were not making it back into how these products are designed and implemented.15

Meta’s content moderation was a disaster in Myanmar—and around the world—not only because it was treated and staffed like an afterthought, but because it was competing against Facebook’s core machinery.

And just as the house always wins, the core machinery of a mass-scale product built to boost engagement always defeats retroactive and peripheral attempts at cleanup.

This is especially true once organized commercial and nation-state actors figured out how to take over that machinery with large-scale fake Page networks boosted by fake engagement, which brings us to a less-discussed revelation: By the mid-2010s, Facebook had effectively become the equivalent of a botnet in the hands of any group, governmental or commercial, who could summon the will and resources to exploit it.

A lot of people did, including, predictably, some of the worst people in the world.

Meta’s zombie networks

Ophiocordyceps formicarum observed at the Mushroom Research Centre, Chiang Mai, Thailand; Steve Axford (CC BY-SA 3.0)

Content warning: The NYT article I link to below is important, but it includes photographs of mishandled bodies, including those of children. If you prefer not to see those, a “reader view” or equivalent may remove the images. (Sarah Sentilles’ 2018 article on which kinds of bodies US newspapers put on display may be of interest.)

In 2018, the New York Times published a front-page account of what really happened on Facebook in Myanmar, which is that beginning around 2013, Myanmar’s military, the Tatmadaw, set up a dedicated, ultra-secret anti-Rohingya hatefarm spread across military bases in which up to 700 staffers worked in shifts to manufacture the appearance of overwhelming support for the genocide the same military then carried out.16

When the NYT did their investigation in 2018, all those fake Pages were still up.

Here’s how it worked: First, the military set up a sprawling network of fake accounts and Pages on Facebook. The fake accounts and Pages focused on innocuous subjects like beauty, entertainment, and humor. These Pages were called things like, “Beauty and Classic,” “Down for Anything,” “You Female Teachers,” “We Love Myanmar,” and “Let’s Laugh Casually.” Then military staffers, some trained by Russian propaganda specialists, spent years tending the Pages and gradually building up followers.17

Then, using this array of long-nurtured fake Pages—and Groups, and accounts—the Tatmadaw’s propagandists used everything they’d learned about Facebook’s algorithms to post and boost viral messages that cast Rohingya people as part of a global Islamic threat, and as the perpetrators of a never-ending stream of atrocities. The Times reports:

Troll accounts run by the military helped spread the content, shout down critics and fuel arguments between commenters to rile people up. Often, they posted sham photos of corpses that they said were evidence of Rohingya-perpetrated massacres…18

That the Tatmadaw was capable of such a sophisticated operation shouldn’t have come as a surprise. Longtime Myanmar digital rights and technology researcher Victoire Rio notes that the Tatmadaw had been openly sending its officers to study in Russia since 2001, was “among the first adopters of the Facebook platform in Myanmar” and launched “a dedicated curriculum as part of its Defense Service Academy Information Warfare training.”19

What these messages did

I don’t have the access required to sort out which specific messages originated from extremist religious networks vs. which were produced by military operations, but I’ve seen a lot of the posts and comments central to these overlapping campaigns in the UN documents and human rights reports.

They do some very specific things:

- They dehumanize the Rohingya: The Facebook messages speak of the Rohingya as invasive species that outbreed Buddhists and Myanmar’s real ethnic groups. There are a lot of bestiality images.

- They present the Rohingya as inhumane, as sexual predators, and as an immediate threat: There are a lot of graphic photos of mangled bodies from around the world, most of them presented as Buddhist victims of Muslim killers—usually Rohingya. There are a lot of posts about Rohingya men raping, forcibly marrying, beating, and murdering Buddhist women. One post that got passed around a lot includes a graphic photo of a woman tortured and murdered by a Mexican cartel, presented as a Buddhist woman in Myanmar murdered by the Rohingya.

- They connect the Rohingya to the “global Islamic threat”: There’s a lot of equating Rohingya people with ISIS terrorists and assigning them group responsibility for real attacks and atrocities by distant Islamic terror organizations.

Ultimately, all of these moves flow into demands for violence. The messages call incessantly and graphically for mass killings, beatings, and forced deportations. They call not for punishment, but annihilation.

This is, literally, textbook preparation for genocide, and I want to take a moment to look at how it works.

Helen Fein is the author of several definitive books on genocide, a co-founder and first president of the International Association of Genocide Scholars, and the founder of the Institute for the Study of Genocide. I think her description of the ways genocidaires legitimize their attacks holds up extremely well despite having been published 30 years ago. Here, she classifies a specific kind of rhetoric as one of the defining characteristics of genocide:

Is there evidence of an ideology, myth, or an articulated social goal which enjoins or justifies the destruction of the victim? Besides the above, observe religious traditions of contempt and collective defamation, stereotypes, and derogatory metaphor indicating the victim is inferior, subhuman (animals, insects, germs, viruses) or superhuman (Satanic, omnipotent), or other signs that the victims were pre-defined as alien, outside the universe of obligation of the perpetrator, subhuman or dehumanized, or the enemy—i.e., the victim needs to be eliminated in order that we may live (Them or Us).20

It’s also necessary for genocidaires to make claims—often supported by manufactured evidence—that the targeted group itself is the true danger, often by projecting genocidal intent onto the group that will be attacked.

Adam Jones, the guy who wrote a widely used textbook on genocide, puts it this way:

One justifies genocidal designs by imputing such designs to perceived opponents. The Tutsis/ Croatians/Jews/Bolsheviks must be killed because they harbor intentions to kill us, and will do so if they are not stopped/prevented/annihilated. Before they are killed, they are brutalized, debased, and dehumanized—turning them into something approaching “subhumans” or “animals” and, by a circular logic, justifying their extermination.21

So before their annihilation, the target group is presented as outcast, subhuman, vermin, but also themselves genocidal—a mortal threat. And afterward, the extraordinary cruelties characteristic of genocide reassure those committing the atrocities that their victims aren’t actually people.

The Tatmadaw committed atrocities in Myanmar. I touched on them in Part II and I’m not going to detail them here. But the figuratively dehumanizing rhetoric I described in Parts I and II can’t be separated from the literally dehumanizing things the Tatmadaw did to the humans they maimed and traumatized and killed. Especially now that it’s clear that the military was behind much of the rhetoric as well as the violent actions that rhetoric worked to justify.

In some cases, even the methods match up: The military’s campaign of intense and systematic sexual violence toward and mutilation of women and girls, combined with the concurrent mass murder of children and babies, feels inextricably connected to the rhetoric that cast the Rohingya as both a sexual and reproductive threat who endanger the safety of Buddhist women and outbreed the ethnicities that belong in Myanmar.

Genocidal communications are an inextricable part of a system that turns “ethnic tensions” into mass death. When we see that the Tatmadaw was literally the operator of covert hate and dehumanization propaganda networks on Facebook, I think the most rational way to understand those networks is as an integral part of the genocidal campaign.

After the New York Times article went live, Meta did two big takedowns. Nearly four million people were following the fake Pages identified by either the NYT or by Meta in follow-up investigations. (Meta had previously removed the Tatmadaw’s own official Pages and accounts and 46 “news and opinion” Pages that turned out to be covertly operated by the military—those Pages were followed by nearly 12 million people.)

So given these revelations and disclosures, here’s my question: Does the deliberate, adversarial use of Facebook by Myanmar’s military as a platform for disinformation and propaganda take any of the heat off of Meta? After all, a sovereign country’s military is a significant adversary.

But here’s the thing: Alex Stamos, Facebook’s Chief Security Officer, had been trying since 2016 to get Meta’s management and executives to acknowledge and meaningfully address the fact that Facebook was being used as a host for both commercial and state-sponsored covert influence ops around the world. Including in the only place where it was likely to get the company into really hot water: the United States.

“Oh fuck”

On December 16, 2016, Facebook’s newish Chief Security Officer, Alex Stamos—who now runs Stanford’s Internet Observatory—rang Meta’s biggest alarm bells by calling an emergency meeting with Mark Zuckerberg and other top-level Meta executives.

In that meeting, documented in Sheera Frenkel and Cecilia Kang’s book, An Ugly Truth, Stamos handed out a summary outlining the Russian capabilities. It read:

We assess with moderate to high confidence that Russian state-sponsored actors are using Facebook in an attempt to influence the broader political discourse via the deliberate spread of questionable news articles, the spread of information from data breaches intended to discredit, and actively engaging with journalists to spread said stolen information.22

“Oh fuck, how did we miss this?” Zuckerberg responded.

This whole section draws information from Frenkel and Kang’s reporting in An Ugly Truth. For context, they’re both New York Times reporters—Frenkel previously covered Facebook and other tech companies for BuzzFeed News, and Kang did the same at the Washington Post.

Stamos’ team had also uncovered “a huge network of false news sites on Facebook” posting and cross-promoting sensationalist bullshit, much of it political disinformation, along with examples of governmental propaganda operations from Indonesia, Turkey, and other nation-state actors. And the team had recommendations on what to do about it.

Frenkel and Kang paraphrase Stamos’ message to Zuckerberg (my emphasis):

Facebook needed to go on the offensive. It should no longer merely monitor and analyze cyber operations; the company had to gear up for battle. But to do so required a radical change in culture and structure. Russia’s incursions were missed because departments across Facebook hadn’t communicated and because no one had taken the time to think like Vladimir Putin.23

Those changes in culture and structure didn’t happen. Stamos began to realize that to Meta’s executives, his work uncovering the foreign influence networks, and his choice to bring them to the executives’ attention, were both unwelcome and deeply inconvenient.

All through the spring and summer of 2017, instead of retooling to fight the massive international category of abuse Stamos and his colleagues had uncovered, Facebook played hot potato with the information about the ops Russia had already run.

On September 21, 2017, while the Tatmadaw’s genocidal “clearance operations” were approaching their completion, Mark Zuckerberg finally spoke publicly about the Russian influence campaign for the first time.24

In the intervening months, the massive covert influence networks operating in Myanmar ground along, unnoticed.

Thanks to Sophie Zhang, a data scientist who spent two years at Facebook fighting to get networks like the Tatmadaw’s removed, we know quite a lot about why.

What Sophie Zhang found

In 2018, Facebook hired a data scientist named Sophie Zhang and assigned her to a new team working on fake engagement—and specifically on “scripted inauthentic activity,” or bot-driven fake likes and shares.

Within her first year on the team, Zhang began finding examples of bot-driven engagement being used for political messages in both Brazil and India ahead of their national elections. Then she found something that concerned her a lot more. Karen Hao of the MIT Technology Review writes:

The administrator for the Facebook page of the Honduran president, Juan Orlando Hernández, had created hundreds of pages with fake names and profile pictures to look just like users—and was using them to flood the president’s posts with likes, comments, and shares. (Facebook bars users from making multiple profiles but doesn’t apply the same restriction to pages, which are usually meant for businesses and public figures.)

The activity didn’t count as scripted, but the effect was the same. Not only could it mislead the casual observer into believing Hernández was more well-liked and popular than he was, but it was also boosting his posts higher up in people’s newsfeeds. For a politician whose 2017 reelection victory was widely believed to be fraudulent, the brazenness—and implications—were alarming.25

When Zhang brought her discovery back to the teams working on Pages Integrity and News Feed Integrity, both refused to act, either to stop fake Pages from being created, or to keep the fake engagement signals the fake Pages generate from making posts go viral.

But Zhang kept at it, and after a year, Meta finally removed the Honduran network. The very next day, Zhang reported a network of fake Pages in Albania. The Guardian’s Julia Carrie Wong explains what came next:

In August, she discovered and filed escalations for suspicious networks in Azerbaijan, Mexico, Argentina and Italy. Throughout the autumn and winter she added networks in the Philippines, Afghanistan, South Korea, Bolivia, Ecuador, Iraq, Tunisia, Turkey, Taiwan, Paraguay, El Salvador, India, the Dominican Republic, Indonesia, Ukraine, Poland and Mongolia.26

For a much more recent example of Meta refusing to remove fake-Page networks and coordinated influence campaigns connected to high-profile accounts, see this September 27, 2023 Washington Post article on how Meta knowingly let covert coordinated influence campaigns run loose on Facebook because they were run by the Indian army.

According to Zhang, Meta eventually established a policy against “inauthentic behavior,” but didn’t enforce it, and rejected Zhang’s proposal to punish repeat fake-Page creators by banning their personal accounts because of policy staff’s “discomfort with taking action against people connected to high-profile accounts.”27

Zhang discovered that even when she took initiative to track down covert influence campaigns, the teams who could take action to remove them didn’t—not without persistent “lobbying.” So Zhang tried harder. Here’s Karen Hao again:

She was called upon repeatedly to help handle emergencies and praised for her work, which she was told was valued and important.

But despite her repeated attempts to push for more resources, leadership cited different priorities. They also dismissed Zhang’s suggestions for a more sustainable solution, such as suspending or otherwise penalizing politicians who were repeat offenders. It left her to face a never-ending firehose: The manipulation networks she took down quickly came back, often only hours or days later. “It increasingly felt like I was trying to empty the ocean with a colander,” she says.28

Julia Carrie Wong’s Guardian piece reveals something interesting about Zhang’s reporting chain, which is that Meta’s Vice President of Integrity, Guy Rosen, was one of the people giving her the hardest pushback.

Remember Internet.org, also known as Free Basics, aka Meta’s push to dominate global internet use in all those countries it would go on to “deprioritize” and generally ignore?

Guy Rosen, Meta’s then-newish VP of Integrity, is the guy who previously ran Internet.org. He came to lead Integrity directly from being VP of Growth. Before getting acquihired by Meta, Rosen co-founded a company The Information describes as “a startup that analyzed what people did on their smartphones.”29

Meta bought that startup in 2013, nominally because it would help Internet.org. In a very on-the-nose development, Rosen’s company’s supposedly privacy-protecting VPN software allowed Meta to collect huge amounts of data—so much that Apple booted the app from its store.

So that’s Facebook’s VP of Integrity.

“We simply didn’t care enough to stop them”

In the Guardian, Julia Carrie Wong reports that in the fall of 2019, Zhang discovered that the Honduras network was back up, and she couldn’t get Meta’s Threat Intelligence team to deal with it. That December, she posted an internal memo about it. Rosen responded:

Facebook had “moved slower than we’d like because of prioritization” on the Honduras case, Rosen wrote. “It’s a bummer that it’s back and I’m excited to learn from this and better understand what we need to do systematically,” he added. But he also chastised her for making a public [public as in within Facebook —EK] complaint, saying: “My concern is that threads like this can undermine the people that get up in the morning and do their absolute best to try to figure out how to spend the finite time and energy we all have and put their heart and soul into it.”[31]

You can go read the MIT Technology Review piece and the Guardian piece, which have far more managerial quotes than I can fit in here, all of them hair-raising.

In a private follow-up conversation (still in December, 2019), Zhang alerted Rosen that she’d been told that the Facebook Threat Intelligence team would only prioritize fake networks affecting “the US/western Europe and foreign adversaries such as Russia/Iran/etc.”

Rosen told her that he agreed with those priorities. Zhang pushed back (my emphasis):

I get that the US/western Europe/etc is important, but for a company with effectively unlimited resources, I don’t understand why this cannot get on the roadmap for anyone … A strategic response manager told me that the world outside the US/Europe was basically like the wild west with me as the part-time dictator in my spare time. He considered that to be a positive development because to his knowledge it wasn’t covered by anyone before he learned of the work I was doing.

Rosen replied, “I wish resources were unlimited.”30

I’ll quote Wong’s next passage in full: “At the time, the company was about to report annual operating profits of $23.9bn on $70.7bn in revenue. It had $54.86bn in cash on hand.”

In early 2020, Zhang’s managers told her she was all done tracking down influence networks—it was time she got back to hunting and erasing “vanity likes” from bots instead.

But Zhang believed that if she stopped, no one else would hunt down big, potentially consequential covert influence networks. So she kept doing at least some of it, including advocating for action on an inauthentic Azerbaijan network that appeared to be connected to the country’s ruling party. In an internal group, she wrote that, “Unfortunately, Facebook has become complicit by inaction in this authoritarian crackdown.”

Although we conclusively tied this network to elements of the government in early February, and have compiled extensive evidence of its violating nature, the effective decision was made not to prioritize it, effectively turning a blind eye.31

After those messages, Threat Intelligence decided to act on the network after all.

Then Meta fired Zhang for poor performance.

On her way out the door, Zhang posted a long exit memo (7,800 words) describing what she’d seen. Meta deleted it, so Zhang put up a password-protected version on her own website so her colleagues could see it. So Meta got Zhang’s entire website taken down and her domain deactivated. Eventually Meta got enough employee pressure that it put an edited version back up on its internal site.32

Shortly thereafter, someone leaked the memo to BuzzFeed News.

In the memo, Zhang wrote:

I’ve found multiple blatant attempts by foreign national governments to abuse our platform on vast scales to mislead their own citizenry, and caused international news on multiple occasions. I have personally made decisions that affected national presidents without oversight, and taken action to enforce against so many prominent politicians globally that I’ve lost count.33

And: “[T]he truth was, we simply didn’t care enough to stop them.”

On her final day at Meta, Zhang left notes for her colleagues, tallying suspicious accounts involved in political influence campaigns that needed to be investigated:

There were 200 suspicious accounts still boosting a politician in Bolivia, she recorded; 100 in Ecuador, 500 in Brazil, 700 in Ukraine, 1,700 in Iraq, 4,000 in India and more than 10,000 in Mexico.34

“With all due respect”

Zhang’s work at Facebook happened after the wrangling over Russian influence ops that Alex Stamos’ team found. And after the genocide in Myanmar. And after Mark Zuckerberg did his press-and-government tour about how hard Meta tried and how much better they’d do after Myanmar.35

It was an entire calendar year after the New York Times found the Tatmadaw’s genocide-fueling fake-Page hatefarm that Guy Rosen, Facebook’s VP of Integrity, told Sophie Zhang that the only coordinated fake networks Facebook would take down were the ones that affected the US, Western Europe, and “foreign adversaries.”36

In response to Zhang’s disclosures, Rosen later hopped onto Twitter to deliver his personal assessment of the networks Zhang found and couldn’t get removed:

With all due respect, what she’s described is fake likes—which we routinely remove using automated detection. Like any team in the industry or government, we prioritize stopping the most urgent and harmful threats globally. Fake likes is not one of them.

One of Frances Haugen’s disclosures includes an internal memo that summarizes Meta’s actual, non-Twitter-snark awareness of the way Facebook has been hollowed out for routine use by covert influence campaigns:

We frequently observe highly-coordinated, intentional activity on the FOAS [Family of Apps and Services] by problematic actors, including states, foreign actors, and actors with a record of criminal, violent or hateful behaviour, aimed at promoting social violence, promoting hate, exacerbating ethnic and other societal cleavages, and/or delegitimizing social institutions through misinformation. This is particularly prevalent—and problematic—in At Risk Countries and Contexts.37

So, they knew.

Because of Haugen’s disclosures, we also know that in 2020, for the category, “Remove, reduce, inform/measure misinformation on FB Apps, Includes Community Review and Matching”—so, that’s moderation targeting misinformation specifically—only 13% of the total budget went to the non-US countries that provide more than 90% of Facebook’s user base, and which include all of those At Risk Countries. The other 87% of the budget was reserved for the 10% of Facebook users who live in the United States.38

In case any of this seems disconnected from the main thread of what happened in Myanmar, here’s what (formerly Myanmar-based) researcher Victoire Rio had to say about covert coordinated influence networks in her extremely good 2020 case study about the role of social media in Myanmar’s violence:

Bad actors spend months—if not years—building networks of online assets, including accounts, pages and groups, that allow them to manipulate the conversation. These inauthentic presences continue to present a major risk in places like Myanmar and are responsible for the overwhelming majority of problematic content.39

Note that Rio says that these inauthentic networks—the exact things Sophie Zhang chased down until she got fired for it—continued to present a major risk in 2020.

It’s time to skip ahead.

Let’s go to Myanmar in 2021, four years after the peak of the genocide. After everything I’ve dealt with in this whole painfully long series so far, it would be fair to assume that Meta would be prioritizing getting everything right in Myanmar. Especially after the coup.

Meta in Myanmar, again (2021)

If you want to understand the coup and its aftermath, you might read the Council on Foreign Relations explainer, an AFAICT competent NYT backgrounder, the Crisis Group’s wonk-grade explainer, or the remarkable first-person “Chronicle of a Coup” blog series at Tea Circle, in which a pseudonymous westerner relates his experience of life in Myanmar during and after the coup. Human Rights Watch is reporting on the junta’s abuses, which they consider crimes against humanity.

In 2021, the Tatmadaw deposed Myanmar’s democratically elected government and transferred the leadership of the country to the military’s Commander-in-Chief. Since then, the military has turned the machines of surveillance, administrative repression, torture, and murder that it refined on the Rohingya and other ethnic minorities onto Myanmar’s Buddhist ethnic Bamar majority.

Also in 2021, Facebook’s director of policy for APAC Emerging Countries, Rafael Frankel, told the Associated Press that Facebook had now “built a dedicated team of over 100 Burmese speakers.”

This “dedicated team” is, presumably, the group of contract workers employed by the Accenture-run “Project Honey Badger” team in Malaysia.40 (Which, Jesus.)

In October of 2021, the Associated Press took a look at how that’s working out on Facebook in Myanmar. Right away, they found threatening and violent posts:

One 2 1/2 minute video posted on Oct. 24 of a supporter of the military calling for violence against opposition groups has garnered over 56,000 views.

“So starting from now, we are the god of death for all (of them),” the man says in Burmese while looking into the camera. “Come tomorrow and let’s see if you are real men or gays.”

One account posts the home address of a military defector and a photo of his wife. Another post from Oct. 29 includes a photo of soldiers leading bound and blindfolded men down a dirt path. The Burmese caption reads, “Don’t catch them alive.”41

That’s where content moderation stood in 2021. What about the algorithmic side of things? Is Facebook still boosting dangerous messages in Myanmar?

In the spring of 2021, Global Witness analysts made a clean Facebook account with no history and searched for တပ်မတော် (“Tatmadaw”). They opened the top page in the results, a military fan page, and found no posts that broke Facebook’s new, stricter rules. Then they hit the “like” button, which caused a pop-up with “related pages” to appear. Then the team popped open the first five recommended pages.

Here’s what they found:

Three of the five top page recommendations that Facebook’s algorithm suggested contained content posted after the coup that violated Facebook’s policies. One of the other pages had content that violated Facebook’s community standards but that was posted before the coup and therefore isn’t included in this article.

Specifically, they found messages that included:

- Incitement to violence

- Content that glorifies the suffering or humiliation of others

- Misinformation that can lead to physical harm42

As well as several kinds of posts that violated Facebook’s new and more specific policies on Myanmar.

So not only were the violent, violence-promoting posts still showing up in Myanmar four years after the atrocities in Rakhine State (and after the Tatmadaw turned the full machinery of its violence onto opposition members of Myanmar’s Buddhist ethnic majority), but Facebook was still funneling users directly into them after even the lightest engagement with anodyne pro-military content.

This is in 2021, with Meta throwing vastly more resources at the problem than it ever did during the period leading up to and including the genocide of the Rohingya people. And these are active recommendations made by Facebook’s own algorithms, precisely as outlined in the Meta memos in Haugen’s disclosures.

By any reasonable measure, I think this is a failure.

Meta didn’t respond to requests for comment from Global Witness, but when the Guardian and AP picked up the story, Meta got back to them with…this:

Our teams continue to closely monitor the situation in Myanmar in real-time and take action on any posts, Pages or Groups that break our rules. We proactively detect 99 percent of the hate speech removed from Facebook in Myanmar, and our ban of the Tatmadaw and repeated disruption of coordinated inauthentic behavior has made it harder for people to misuse our services to spread harm.43

One more time: This statement says nothing about how much hate speech is removed. It’s pure misdirection.

Internal Meta memos highlight ways to use Facebook’s algorithmic machinery to sharply reduce the spread of what they called “high-harm misinfo.” For those potentially harmful topics, you “hard demote” (aka “push down” or “don’t show”) reshared posts that were originally made by someone who isn’t friended or followed by the viewer. (Frances Haugen talks about this in interviews as “cutting the reshare chain.”)

And this method works. In Myanmar, “reshare depth demotion” reduced “viral inflammatory prevalence” by 25% and cut “photo misinformation” almost in half.

In a reasonable world, I think Meta would have decided to broaden use of this method and work on refining it to make it even more effective. What they did, though, was decide to roll it back within Myanmar as soon as the upcoming elections were over.44

The same SEC disclosure I just cited also notes that Facebook’s AI “classifier” for Burmese hate speech didn’t seem to be maintained or in use—and that algorithmic recommendations were still shuttling people toward violent, hateful messages that violated Facebook’s Community Standards.

So that’s how the algorithms were going. How about the military’s covert influence campaign?

Reuters reported in late 2021 that:

As Myanmar’s military seeks to put down protest on the streets, a parallel battle is playing out on social media, with the junta using fake accounts to denounce opponents and press its message that it seized power to save the nation from election fraud…

The Reuters reporters explain that the military has assigned thousands of soldiers to wage “information combat” in what appears to be an expanded, distributed version of their earlier secret propaganda ops:

“Soldiers are asked to create several fake accounts and are given content segments and talking points that they have to post,” said Captain Nyi Thuta, who defected from the army to join rebel forces at the end of February. “They also monitor activity online and join (anti-coup) online groups to track them.”45

(We know this because Reuters journalists got hold of a high-placed defector from the Tatmadaw’s propaganda wing.)

When asked for comment, Facebook’s regional Director of Public Policy told Reuters that Meta “‘proactively’ detected almost 98 percent of the hate speech removed from its platform in Myanmar.”

“Wasting our lives under tarpaulin”

The Rohingya people forced to flee Myanmar have scattered across the region, but the overwhelming majority of those who fled in 2017 ended up in the Cox’s Bazar region of Bangladesh.

The camps are beyond overcrowded, and they make everyone who lives in them vulnerable to the region’s seasonal flooding, to worsening climate impacts, and to waves of disease. This year, the refugees’ food aid was just cut from the equivalent of $12 a month to $8 a month, because the international community is focused elsewhere.46

The complex geopolitical situation surrounding post-coup Myanmar—in which many western and Asian countries condemn the situation in Myanmar, but don’t act lest they push the Myanmar junta further toward China—seems likely to ensure a long, bloody conflict, with no relief in sight for the Rohingya.47

The UN estimates that more than 960,000 Rohingya refugees now live in refugee camps in Bangladesh. More than half are children, few of whom have had much education at all since coming to the camps six years ago. The UN estimates that the refugees needed about $70.5 million for education in 2022, of which 1.6% was actually funded.48

Amnesty International spoke with Mohamed Junaid, a 23-year-old Rohingya volunteer math and chemistry teacher, who is also a refugee. He told Amnesty:

Though there were many restrictions in Myanmar, we could still do school until matriculation at least. But in the camps our children cannot do anything. We are wasting our lives under tarpaulin.49

In their report, “The Social Atrocity,” Amnesty wrote that in 2020, seven Rohingya youth organizations based in the refugee camps made a formal application to Meta’s Director of Human Rights. They requested that, given its role in the crises that led to their expulsion from Myanmar, Meta provide just one million dollars in funding to support a teacher-training initiative within the camps—a way to give the refugee children a chance at an education that might someday serve them in the outside world.

Meta got back to the Rohingya youth organizations in 2021, a year in which the company cleared $39.3B in profits:

Unfortunately, after discussing with our teams, this specific proposal is not something that we’re able to support. As I think we noted in our call, Facebook doesn’t directly engage in philanthropic activities.

In 2022, Global Witness came back for one more look at Meta’s operations in Myanmar, this time with eight examples of real hate speech aimed at the Rohingya—actual posts from the period of the genocide, all taken from the UN Human Rights Council findings I’ve been linking to so frequently in this series. They submitted these real-life examples of hate speech to Meta as Burmese-language Facebook advertisements.

Meta accepted all eight ads.50

The final post in this series, Part IV, will be up in about a week. Thank you for reading.

“Facebook Misled Investors and the Public About ‘Transparency’ Reports Boasting Proactive Removal of Over 90% of Identified Hate Speech When Internal Records Show That ‘As Little As 3-5% of Hate’ Speech Is Actually Removed,” Whistleblower Aid, undated.↩︎

“Facebook Misled Investors and the Public About ‘Transparency’ Reports Boasting Proactive Removal of Over 90% of Identified Hate Speech When Internal Records Show That ‘As Little As 3-5% of Hate’ Speech Is Actually Removed,” Whistleblower Aid, undated.↩︎

“Facebook Misled Investors and the Public About ‘Transparency’ Reports Boasting Proactive Removal of Over 90% of Identified Hate Speech When Internal Records Show That ‘As Little As 3-5% of Hate’ Speech Is Actually Removed,” Whistleblower Aid, undated. The quoted part is cited to an internal Meta document called “Demoting on Integrity Signals.”↩︎

“Facebook Misled Investors and the Public About ‘Transparency’ Reports Boasting Proactive Removal of Over 90% of Identified Hate Speech When Internal Records Show That ‘As Little As 3-5% of Hate’ Speech Is Actually Removed,” Whistleblower Aid, undated. The quoted part is cited to an internal Meta document called “A first look at the minimum integrity holdout.”↩︎

“Facebook Misled Investors and the Public About ‘Transparency’ Reports Boasting Proactive Removal of Over 90% of Identified Hate Speech When Internal Records Show That ‘As Little As 3-5% of Hate’ Speech Is Actually Removed,” Whistleblower Aid, undated. The quoted part is cited to an internal Meta document called “Afghanistan Hate Speech analysis.”↩︎

“Transcript of Mark Zuckerberg’s Senate hearing,” The Washington Post (which got the transcript via Bloomberg Government), April 10, 2018.↩︎

“Transcript of Zuckerberg’s Appearance Before House Committee,” The Washington Post (which got the transcript via Bloomberg Government), April 11, 2018.↩︎

“Transcript of Zuckerberg’s Appearance Before House Committee,” The Washington Post (which got the transcript via Bloomberg Government), April 11, 2018.↩︎

“Facebook Misled Investors and the Public About ‘Transparency’ Reports Boasting Proactive Removal of Over 90% of Identified Hate Speech When Internal Records Show That ‘As Little As 3-5% of Hate’ Speech Is Actually Removed,” Whistleblower Aid, undated.↩︎

“Facebook Misled Investors and the Public About Its Role Perpetuating Misinformation and Violent Extremism Relating to the 2020 Election and January 6th Insurrection,” Whistleblower Aid, undated; “Facebook Wrestles With the Features It Used to Define Social Networking,” The New York Times, Oct. 25, 2021. This memo hasn’t been made public even in a redacted form, which is frustrating, but the SEC disclosure and NYT article cited here both contain overlapping but not redundant excerpts from which I was able to reconstruct this slightly longer quote.↩︎

“Facebook and responsibility,” internal Facebook memo, authorship redacted, March 9, 2020, archived at Document Cloud as a series of images.↩︎

“Facebook Misled Investors and the Public About Its Role Perpetuating Misinformation and Violent Extremism Relating to the 2020 Election and January 6th Insurrection,” Whistleblower Aid, undated. (Date of the quoted internal memo comes from The Atlantic.)↩︎

“Facebook Misled Investors and the Public About Its Role Perpetuating Misinformation and Violent Extremism Relating to the 2020 Election and January 6th Insurrection,” Whistleblower Aid, undated.↩︎

“Facebook and the Rohingya Crisis,” Myanmar Internet Project, September 29, 2022. This document is offline right now at the Myanmar Internet Project site, so I’ve used Document Cloud to archive a copy of a PDF version a project affiliate provided.↩︎

Full interview with Alex Stamos filmed for The Facebook Dilemma, Frontline, October, 2018.↩︎

“A Genocide Incited on Facebook, With Posts From Myanmar’s Military,” Paul Mozur, The New York Times, October 15, 2018.↩︎

“A Genocide Incited on Facebook, With Posts From Myanmar’s Military,” Paul Mozur, The New York Times, October 15, 2018; “Removing Myanmar Military Officials From Facebook,” Meta takedown notice, August 28, 2018.↩︎

“A Genocide Incited on Facebook, With Posts From Myanmar’s Military,” Paul Mozur, The New York Times, October 15, 2018.↩︎

“The Role of Social Media in Fomenting Violence: Myanmar,” Victoire Rio, Policy Brief No. 78, Toda Peace Institute, June 2020.↩︎

“Genocide: A Sociological Perspective,” Helen Fein, Current Sociology, Vol.38, No.1 (Spring 1990), p. 1-126; republished in Genocide: An Anthropological Reader, ed. Alexander Laban Hinton, Blackwell Publishers, 2002, and this quotation appears on p. 84 of that edition.↩︎

Genocide: A Comprehensive Introduction, Adam Jones, Routledge, 2006, p. 267.↩︎

An Ugly Truth: Inside Facebook’s Battle for Domination, Sheera Frenkel and Cecilia Kang, HarperCollins, July 13, 2021.↩︎

An Ugly Truth: Inside Facebook’s Battle for Domination, Sheera Frenkel and Cecilia Kang, HarperCollins, July 13, 2021.↩︎

“Read Mark Zuckerberg’s full remarks on Russian ads that impacted the 2016 elections,” CNBC News, September 21, 2017.↩︎

“She Risked Everything to Expose Facebook. Now She’s Telling Her Story,” Karen Hao, MIT Technology Review, July 29, 2021.↩︎

“How Facebook Let Fake Engagement Distort Global Politics: A Whistleblower’s Account,” Julia Carrie Wong, The Guardian, April 12, 2021.↩︎

“How Facebook Let Fake Engagement Distort Global Politics: A Whistleblower’s Account,” Julia Carrie Wong, The Guardian, April 12, 2021.↩︎

“She Risked Everything to Expose Facebook. Now She’s Telling Her Story,” Karen Hao, MIT Technology Review, July 29, 2021.↩︎

“The Guy at the Center of Facebook’s Misinformation Mess,” Sylvia Varnham O’Regan, The Information, June 18, 2021.↩︎

“How Facebook Let Fake Engagement Distort Global Politics: A Whistleblower’s Account,” Julia Carrie Wong, The Guardian, April 12, 2021.↩︎

“How Facebook Let Fake Engagement Distort Global Politics: A Whistleblower’s Account,” Julia Carrie Wong, The Guardian, April 12, 2021.↩︎

“She Risked Everything to Expose Facebook. Now She’s Telling Her Story,” Karen Hao, MIT Technology Review, July 29, 2021.↩︎

“‘I Have Blood on My Hands’: A Whistleblower Says Facebook Ignored Global Political Manipulation,” Craig Silverman, Ryan Mac, Pranav Dixit, BuzzFeed News, September 14, 2020.↩︎

“How Facebook Let Fake Engagement Distort Global Politics: A Whistleblower’s Account,” Julia Carrie Wong, The Guardian, April 12, 2021.↩︎

“The Role of Social Media in Fomenting Violence: Myanmar,” Victoire Rio, Policy Brief No. 78, Toda Peace Institute, June 2020.↩︎

“How Facebook Let Fake Engagement Distort Global Politics: A Whistleblower’s Account,” Julia Carrie Wong, The Guardian, April 12, 2021.↩︎

“Facebook Misled Investors and the Public About Bringing ‘the World Closer Together’ Where It Relegates International Users and Promotes Global Division and Ethnic Violence,” Whistleblower Aid, undated. This is a single-source statement, but it’s a budget figure, not an opinion, so I’ve used it.↩︎

“Facebook Misled Investors and the Public About Bringing ‘the World Closer Together’ Where It Relegates International Users and Promotes Global Division and Ethnic Violence,” Whistleblower Aid, undated. This is a single-source statement, but it’s a budget figure, not an opinion, so I’ve used it.↩︎

“The Role of Social Media in Fomenting Violence: Myanmar,” Victoire Rio, Policy Brief No. 78, Toda Peace Institute, June 2020.↩︎

“Zuckerberg Was Called Out Over Myanmar Violence. Here’s His Apology.” Kevin Roose and Paul Mozur, The New York Times, April 9, 2018.↩︎

“Hate Speech in Myanmar Continues to Thrive on Facebook,” Sam McNeil, Victoria Milko, The Associated Press, November 17, 2021.↩︎

“Algorithm of Harm: Facebook Amplified Myanmar Military Propaganda Following Coup,” Global Witness, June 23, 2021.↩︎

“Algorithm of Harm: Facebook Amplified Myanmar Military Propaganda Following Coup,” Global Witness, June 23, 2021.↩︎

“Facebook Misled Investors and the Public About Bringing ‘the World Closer Together’ Where It Relegates International Users and Promotes Global Division and Ethnic Violence,” Whistleblower Aid, undated.↩︎

“‘Information Combat’: Inside the Fight for Myanmar’s Soul,” Fanny Potkin, Wa Lone, Reuters, November 1, 2021.↩︎

“Rohingya Refugees Face Hunger and Loss of Hope After Latest Ration Cuts,” Christine Pirovolakis, UNHCR, the UN Refugee Agency, July 19, 2023.↩︎

“Is Myanmar the Frontline of a New Cold War?,” Ye Myo Hein and Lucas Myers, Foreign Affairs, June 19, 2023.↩︎

“The Social Atrocity: Meta and the Right to Remedy for the Rohingya,” Amnesty International, September 29, 2022; the education funding estimates come from “Bangladesh: Rohingya Refugee Crisis Joint Response Plan 2022,” OCHA Financial Tracking Service, 2022, cited by Amnesty.↩︎

“Facebook Approves Adverts Containing Hate Speech Inciting Violence and Genocide Against the Rohingya,” March 20, 2022.↩︎

“Facebook Misled Investors and the Public About Bringing ‘the World Closer Together’ Where It Relegates International Users and Promotes Global Division and Ethnic Violence,” Whistleblower Aid, undated.↩︎

Screenshot from the film, Wandering: A Rohingya Story, showing a Rohingya mother smoothing her hands over her daughter's laughing face.

Screencap of an internal Meta document titled Facebook and responsibility, with a header image of the Bart Simpson writing on the chalkboard meme in which the writing on the board reads, Facebook is responsible for ranking and recommendations!

Panzagar campaign illustration depicting a Burmese girl with thanaka on her cheek and illustrated flowers coming from her mouth, flowing toward speech bubbles labeled with the names of Burmese towns and cities.

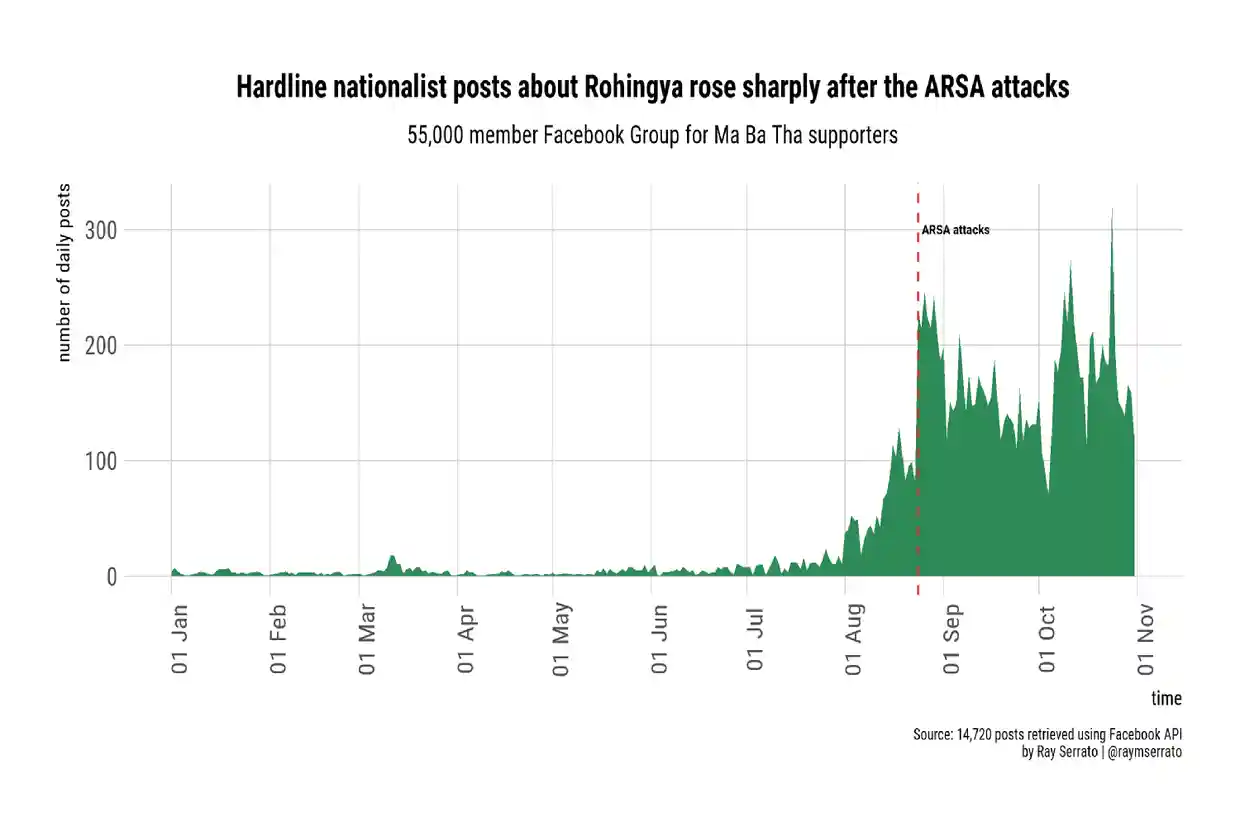

Ray Serrato's graph of Facebook posts in the extremist Group, showing enormous spikes followed by long-term elevation of posting rates beginning in August, 2016.

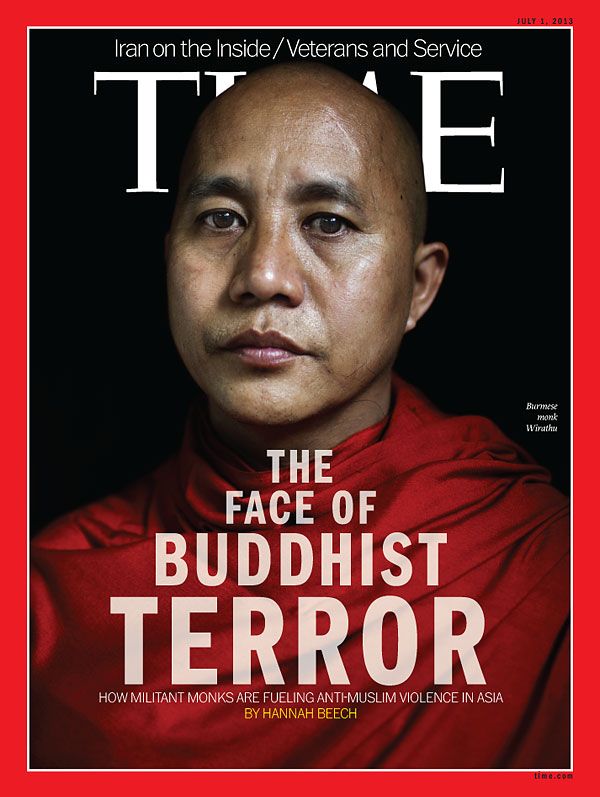

Cover of Time's international editions for July 1, 2013. The photo shows Ashin Wirathu, a Burmese man wearing the crimson robe of a Buddhist monk. His head is shaved and his expression is thoughtful. The cover text reads The Face of Buddhist Terror, in progressively larger type, and underneath, How militant monks are fueling anti-Muslim violence in Asia, by Hannah Beech.

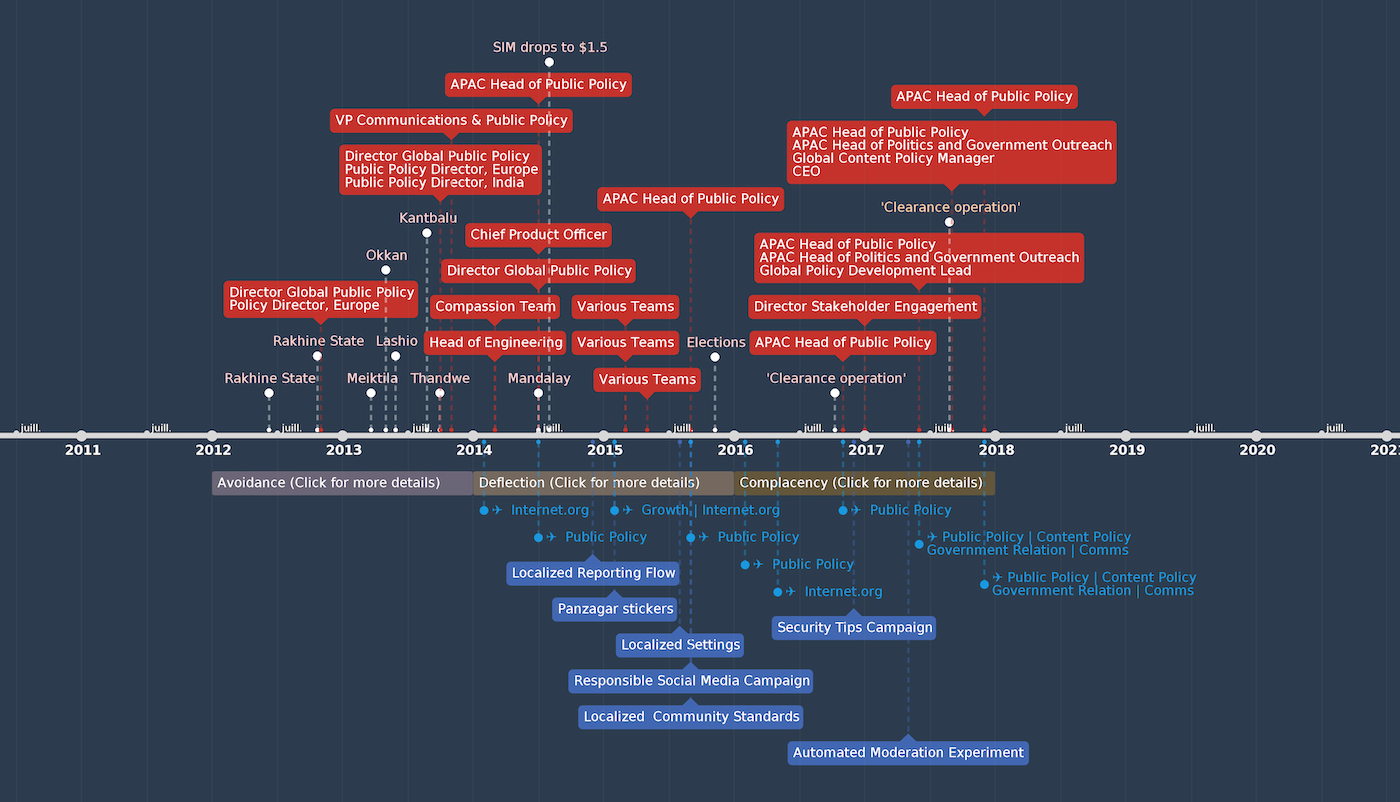

The timeline showing Burmese civil-society and digital-rights leaders' attempts to warn Meta about what it was enabling in Myanmar.