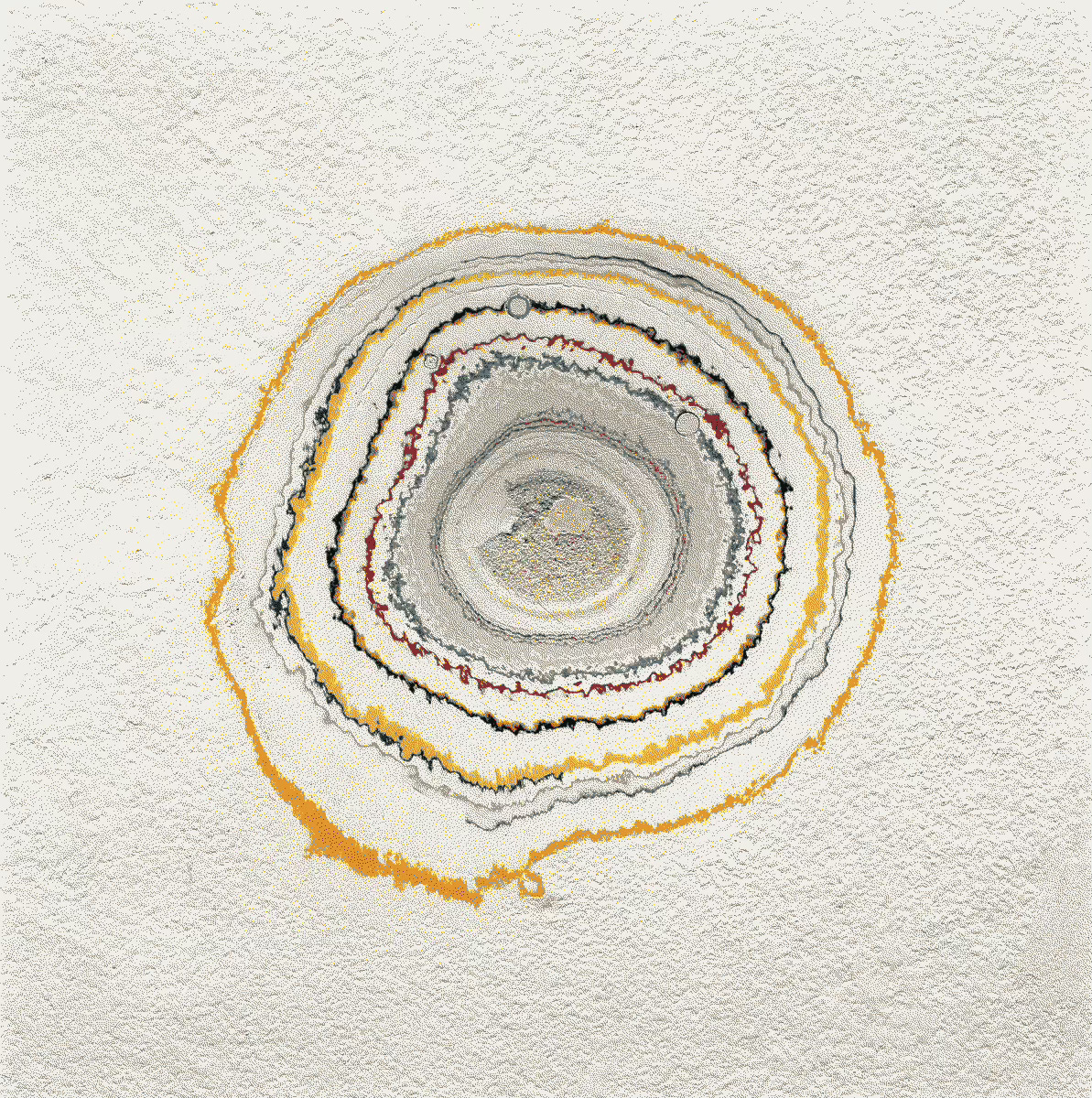

Last week I drove up to San Francisco with some friends for the Game Developers Conference (a massive, and frankly terrifying, video game industry gathering). Along the way we stopped to juice up the Volkswagen, and, killing the long half-hour it takes to replenish an electric car, kicked around a Western-themed truck stop. Its entertainments included not one but three animatronic shooting galleries, a “farmtique” selling salvaged road signs and hand-painted Cheugiana, and a truckbed-sized cross-section of ancient downed sequoia, varnished to a coffee-table shine.

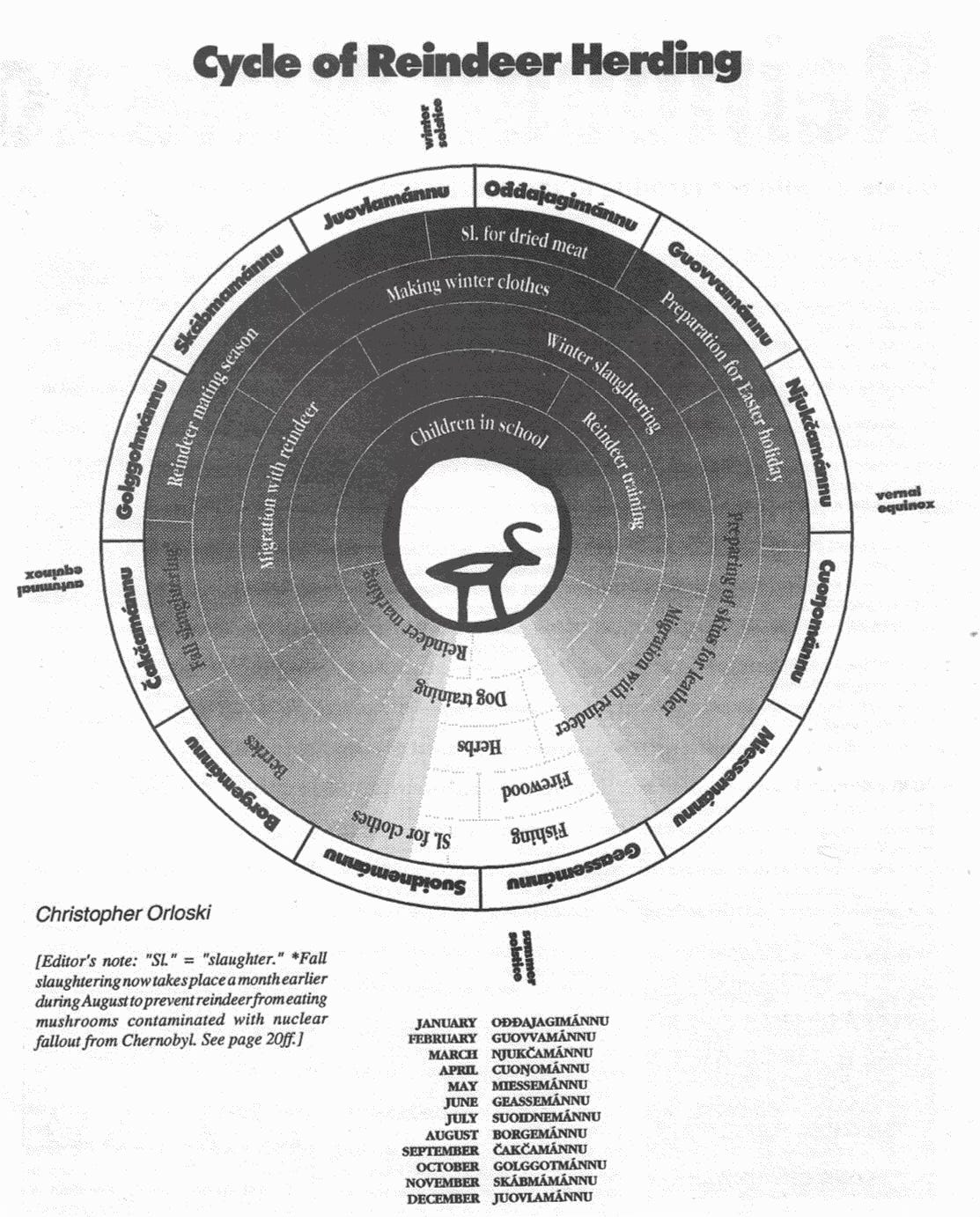

Fitting with the unspecified but palpable Biblical mood of the place, the tree’s rings were marked with the birth of Jesus, the fall of the Roman Empire, a long, unremarked-upon Dark Ages, American Independence and the death of the tree itself.

1776 AD: American Independence

1952 AD: Tree Cut Down

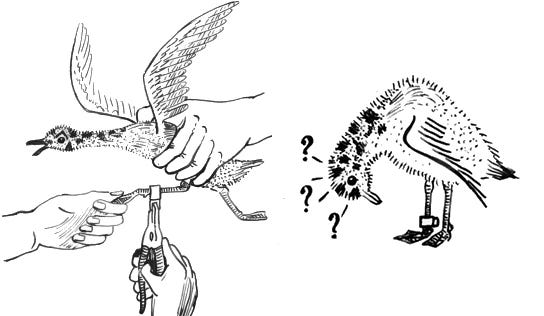

I liked that. According to the Bravoland truck stop in Kettleman City, California, the death of this tree was as important a historical event as the birth of Jesus or the founding of the republic on which it stood. These kinds of displays are common in Northern California; since the early 19th century, the ancients have been flayed, hollowed, and sliced for public consumption. If we’re to believe the markings on their terminal rings, the timeline of human history ends with the tip of the lumberman’s axe.

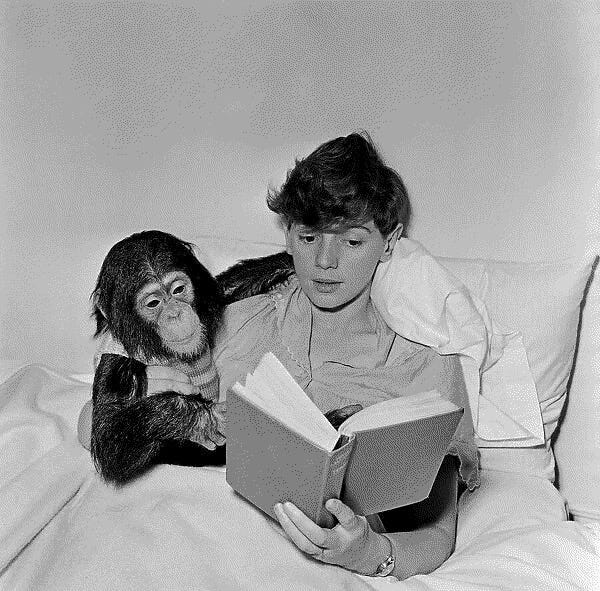

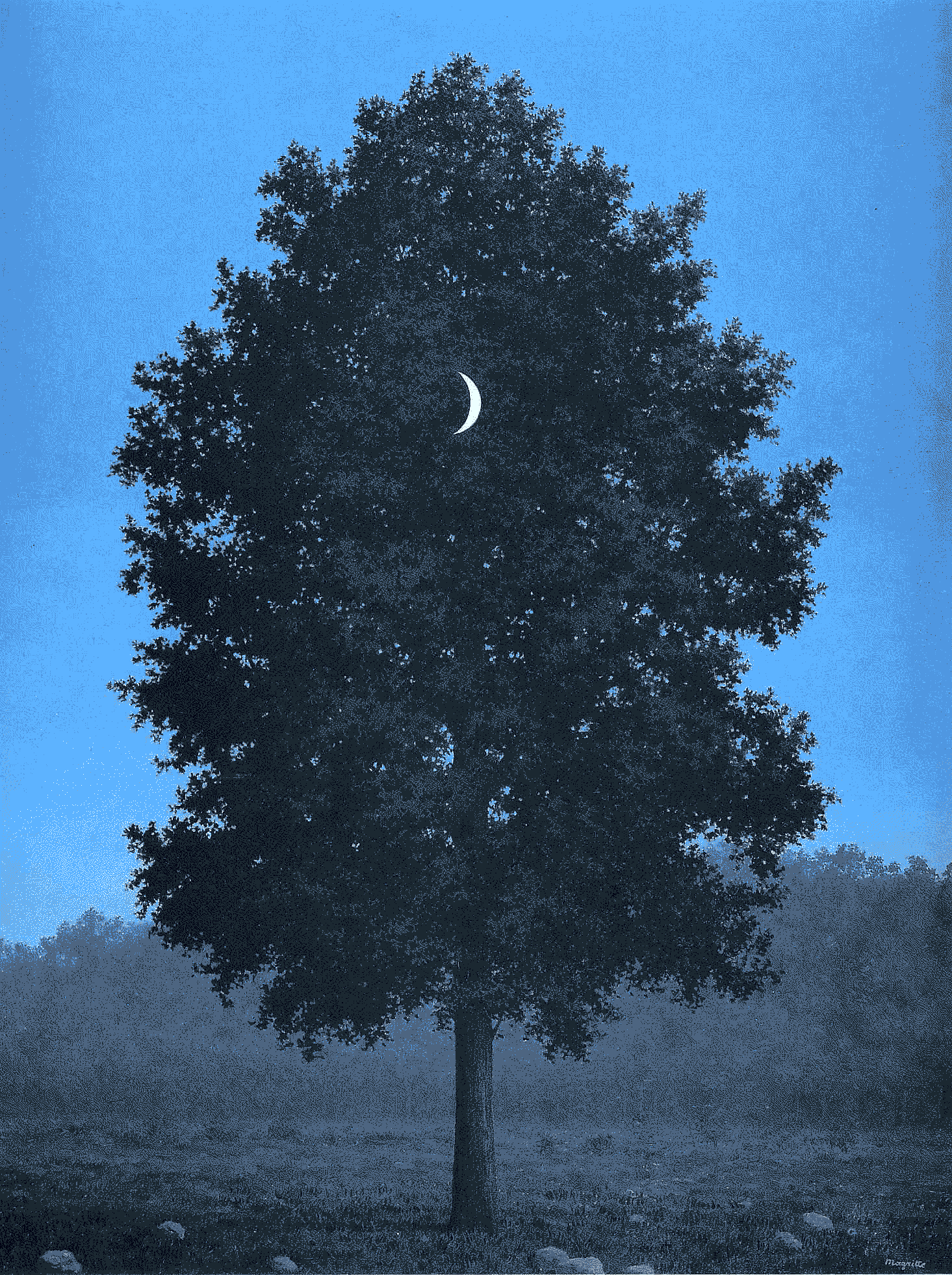

I’ve written before about how difficult it is to see a tree—to synchronize with arboreal time from ground level. The maximum lifespan of a sequoia is 3,500 years. It’s understandable to want to annotate that time with more knowable markers (and we do something similar with size: the Tall Tree in Humboldt County, for example, is 70 feet taller than the Statue of Liberty). But this sequoia wasn’t present for the birth of Jesus or the first musket-shots of the American Revolutionary War. It was just alive at the same time. An indirect form of bearing witness. If people had rings, in my innards there would be pins marking “Invention of the World Wide Web” or “Gulf War.” Instead my cells have turned over a thousand times and all I have are my memories.

My favorite book about California is Jared Farmer’s magisterial Trees in Paradise, which examines the state through the lens of redwood, eucalyptus, orange, and palm trees (although real heads know the palm is taxonomically a grass). Farmer argues that the ancient redwood and sequoia groves of Northern California provided an appealing “landscape of antiquity” to white Protestant colonizers. “For a young nation insecure about its cultural position relative to Europe,” he writes, “natural scenery offered something vaguely compensatory if not commensurate with ruins, myths, and epics.”

Unable (or unwilling) to engage with the existing myths and epics of the Indigenous people of the Sierras, 19th-century Americans instead benchmarked the ancientness of the indigenous trees against Homer, Aristotle, Copernicus, Jesus. And then they cut them all down. They burdened trees with the weight of history and then, so divested, declared that history over, sawing it off at the roots. From what remained, they built a “new” world. Here it is. Slices of the ancient world persist, but they’re shellacked and mounted to the wall, witnesses to our dark age and a reminder of the time destroyed.

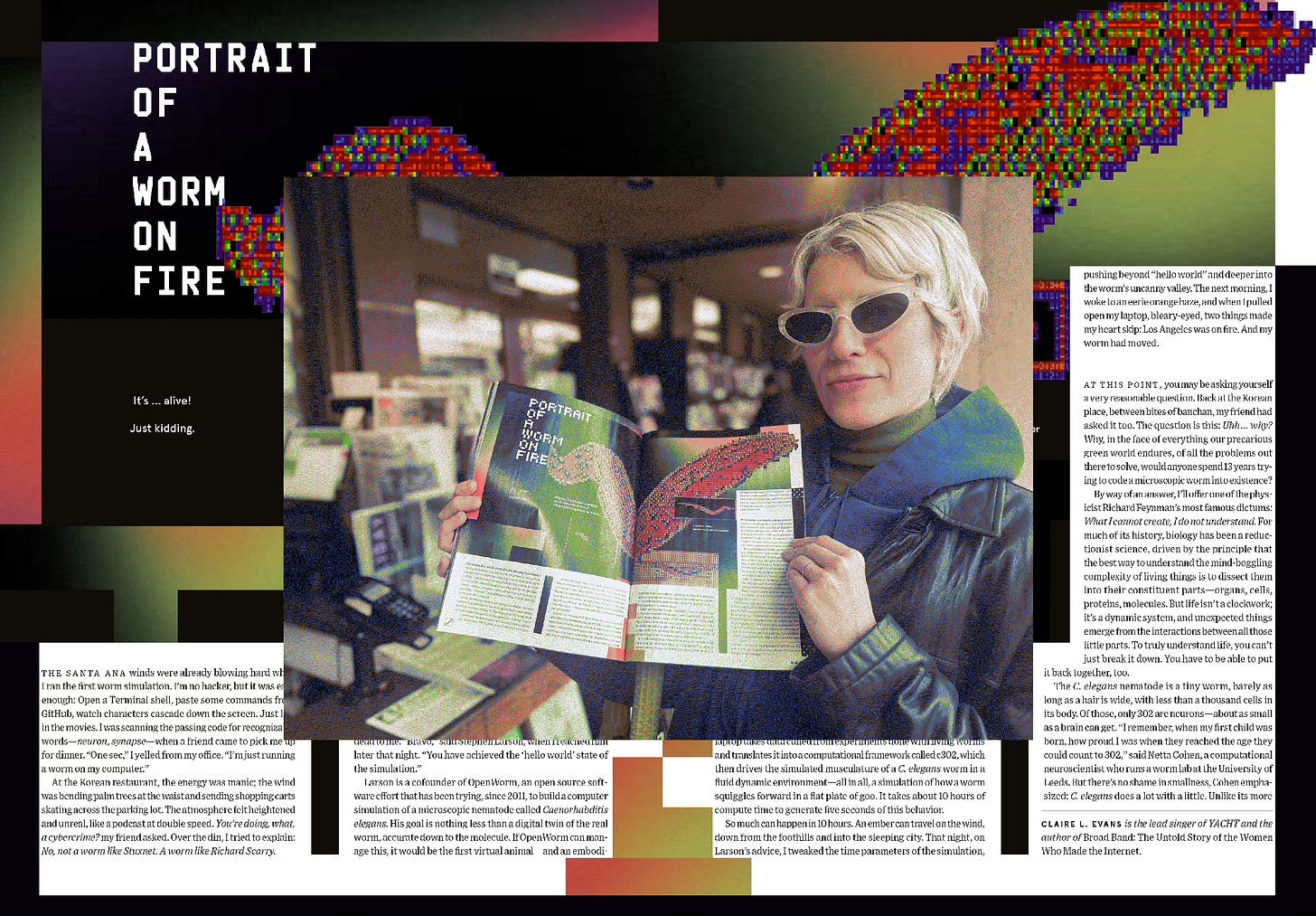

Back in LA, we went to a lecture about the death of images. In it the graphic designer David Rudnick made an argument (more elegant than my recollection of it) that images have historically held a shared narrative value, and that in an era of high-speed, bespoke image generation, these slower shared narratives will erode, and with them the image’s role as a basic unit of culture. Rudnick spoke derisively about images intended for an audience of one—those solipsistic products of the prompt. In the past, images seen by only one person were a sign of madness. I don’t disagree, although it had me thinking about what more steadfast shared referents might be.

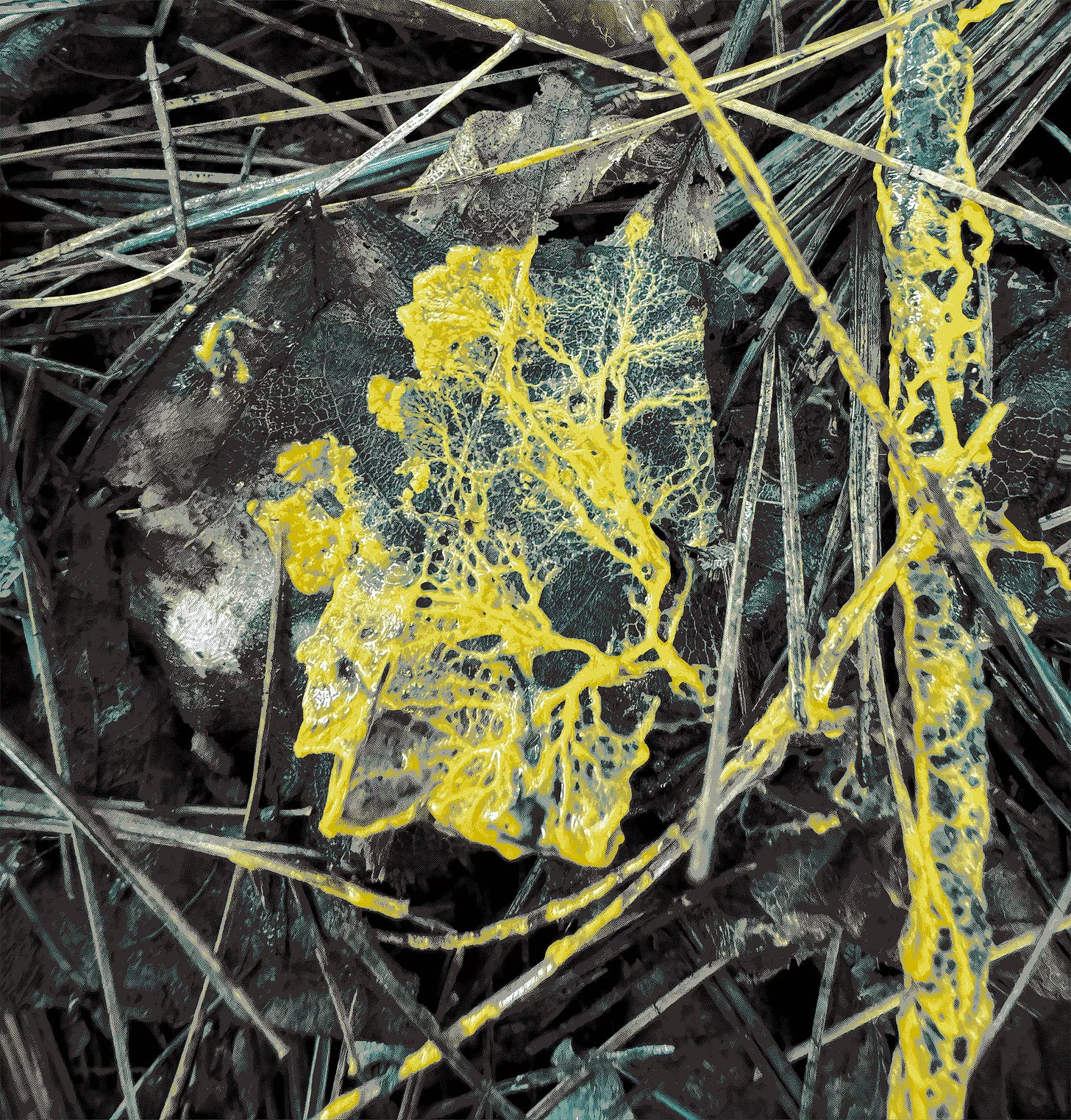

There are those lasting mental images we experience alone but together, like migraine phenomena, shared hallucinations, or the symbology of dreams (admittedly, all their own signs of madness). Or perhaps material can be a referent. The wood that was once a tree; the microchip that was once sand. The tree that’s still standing. Maybe we can build something like culture from a shared knowledge of the origins of things and their fates. Maybe that’s where culture began. After all, a tree receives as much meaning as it imbues. Like an image, it’s a carrier for history. This makes the woodcutter’s crime a crime against narrative as much as it is a crime against nature.

As Laura Tripaldi observes in her excellent book Parallel Minds, our conception of prehistory as an age of jagged flint and stone is a consequence of the relative permanence of those materials. Because rocks last, we assume they’re all there ever was, ignoring the really transformative technologies of early human culture, which have long since returned to the Earth: the proto-software of textile weaving, the advanced chemistry of fermentation and pigment. This material bias extends forward in time as well. We assume the machinations of silicon and rare earth minerals to be eternal. But it’s the soft technologies that have persisted, and will persist.

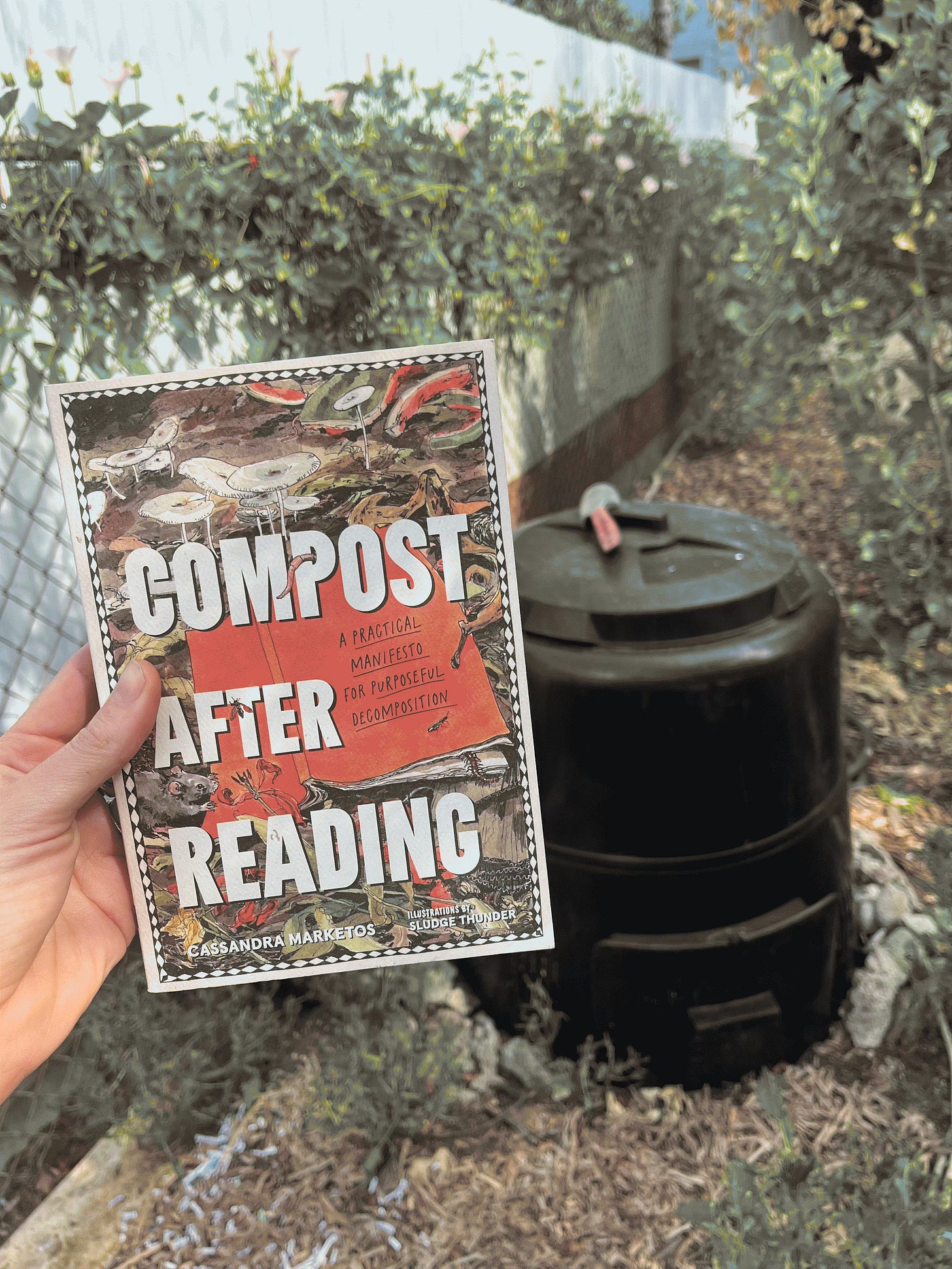

Speaking of soft technologies and the power of the biodegradable, my friend Cass Marketos, an artist and community composter, has just published a manifesto and how-to guide called Compost This Book. Cass has an expansive practice. As an artist, she’s composted memories and ideas; as a composter, she is practical, improvisatory, and welcoming. Her hands are always dirty with good Earth. If you like my work, you will love hers. She also writes an excellent newsletter called The Rot.

A bit of news:

We went to the video game conference because Blippo+ was up for four IGF Awards: the second-most nominated game of the year! We didn’t win, but no matter. That our rogue media experiment received the highest recognition in the industry feels like a triumph in itself. Blippo+ is available to play on Nintendo Switch, PC, and now Mac.

I’m also tickled to say that Blippo+ won the Herman Melville Award for Best Writing at the New York Game Awards earlier this year. I’ve never written a video game before!

A few upcoming events of note:

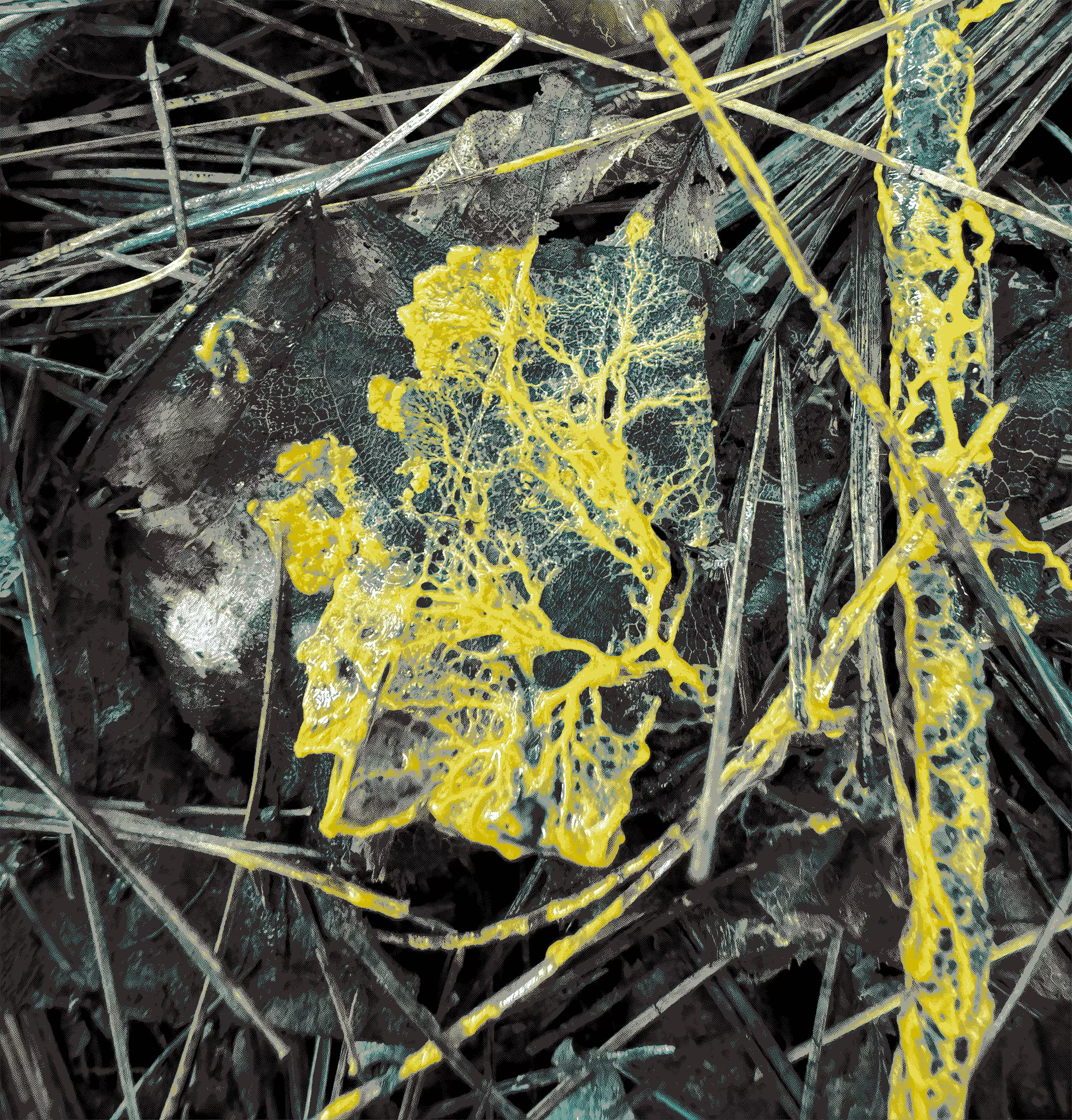

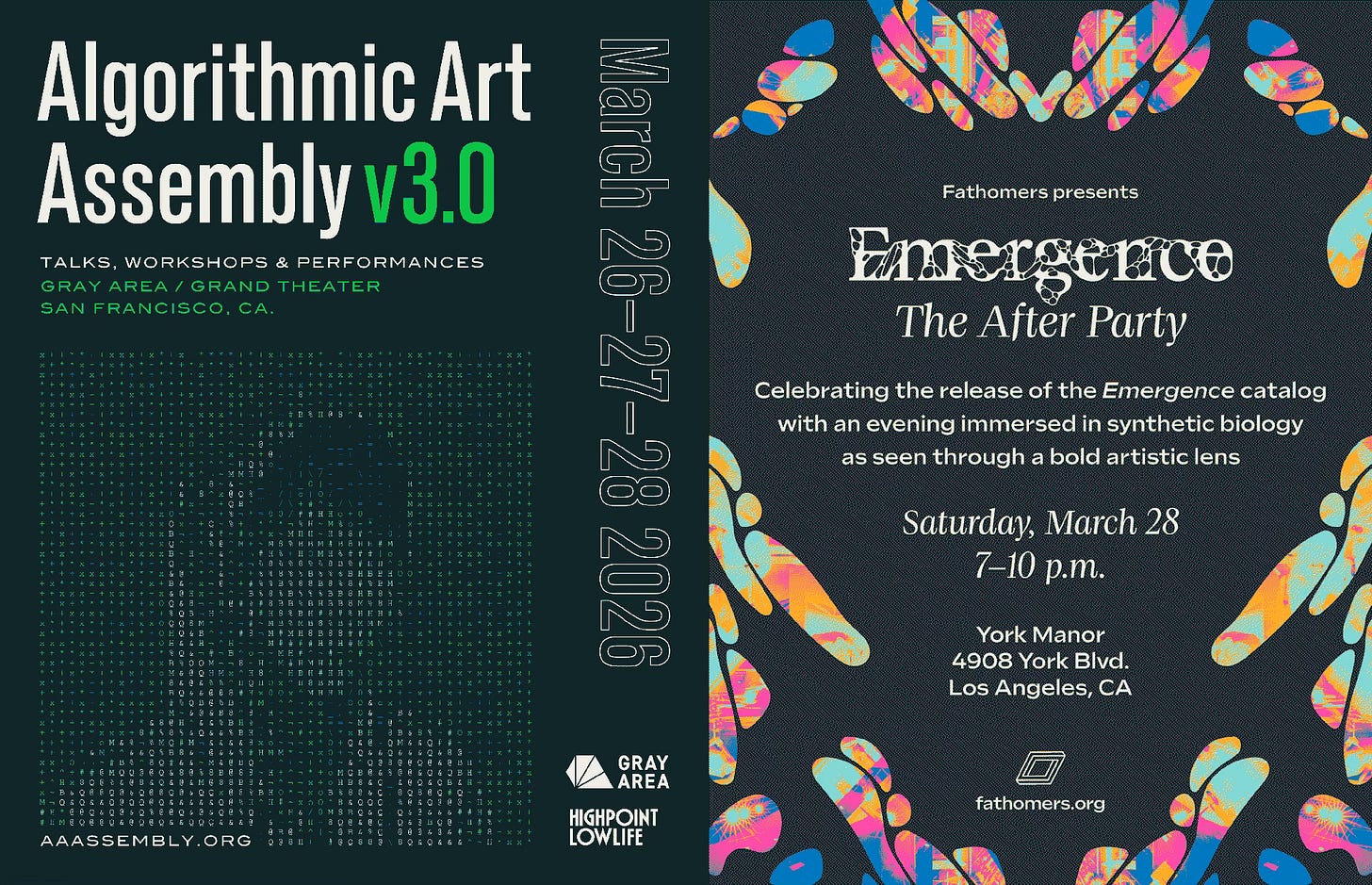

March 27: I’ll be back in San Francisco next week for Gray Area’s annual Algorithmic Art Assembly. I’m giving a talk on brainless cognition—likely the least algorithmic subject on offer that weekend. In related news, a friend told me that folks in the know are now referring to “the intersection of art and technology” as just The Intersection.

March 28: There will be a party in Los Angeles to celebrate the publication of the catalogue for Emergence, a 2024 Fathomers exhibition exploring The Intersection of art and synthetic biology (I wrote an essay about one of the pieces in the show, a human tear gland “organoid” that cried tiny tears throughout the opening). It’ll be a science rager, with a chanting astrophysicist, a “Petri DJ,” and a dance performance about collapsed stars. I’ve been invited to end the evening with a biology-inspired DJ set, so expect goopy tunes and Barbara McClintock soundbites. If you’d like to come, enter the code AFTERLIFE50 for half-off tickets.

xo

Claire