
This newsletter is free to read, and it’ll stay that way. But if you want more - extra posts each month, no sponsored CTAs, access to the community, and a direct line to ask me things - paid subscriptions are $2.50/month. A lot of people have told me it’s worth it.

In February 1637, a single tulip bulb in Haarlem sold for 5,200 guilders - the price of a canal house on the Keizersgracht, or ten times the annual salary of a skilled craftsman. The bulb was a Semper Augustus, streaked white and flame-red, and the buyer never saw it. He bought a piece of paper representing a future flower, and within 3 weeks, the market collapsed. Men who had mortgaged their workshops to buy futures on something they would never hold went home to explain it to their families. Within a century, the Dutch and English were back, inside the South Sea Bubble, where one promoter printed a prospectus for an undertaking he refused to describe. In the same years, French investors were left holding the worthless scrip of John Law's Mississippi Scheme, which had promised them a share of Louisiana gold that didn't exist. By the 1840s it was railway shares, with one in ten English investors buying into lines that were never built. By the 1920s it was radio stocks. By the late 1990s it was dot-com. By 2008 it was tranched American mortgages, rated AAA by people paid by the banks that issued them. By 2021 it was JPEGs of monkeys. By 2026, it was AI stocks.

Every one of these episodes was preceded by someone writing a book about how the last one could never happen again, and every single one ended with the same sentence murmured like a prayer on the way up:

This time is different.

…But it never is.

Is it?

The claim I want to make is this: humans do near-identical things, over and over again, across history. And we do it because our cognitive equipment hasn't changed - the brain running a 21st-century civilization is a Paleolithic brain, shaped by 200,000 years on the savannah and another 10,000 years in small agricultural settlements, and it fears the same things our ancestors feared, and it wants the same things they wanted, and it fails in the same ways.

The loop itself is, in fact, our operating system.

Everything else, the political systems, the technologies, the languages, the ideologies, is the application layer. Applications change, but the operating system doesn't. When an application throws the same error message in Rome, in Berlin in 1933, in Phnom Penh in 1975, and on a Saturday afternoon in a suburban American town in 2024, the error sits in the kernel - and the kernel is not getting patched.

The bubble

The financial bubble (and by that I mean every financial bubble) is the cleanest version of the loop there is. Prices rise, greed overrides caution, debt piles on debt, and the floor gives way. Within ten years the same people, or their children, do it again. And again. And again.

Every bubble is catalogued and studied before the next one begins. Charles Mackay wrote Memoirs of Extraordinary Popular Delusions and the Madness of Crowds in 1841. The book became a bestseller among the same London financiers who, within a few years, would be pouring money into the Railway Mania of the mid-1840s. In 1929, Irving Fisher, one of the most published economists in America, declared that stocks had reached a permanently high plateau - and the crash began nine days later. In 2005, Alan Greenspan testified to Congress that American housing prices reflected local conditions and there was no nationwide bubble. In 2008, there was. The brain has a failure mode around probabilistic risk: it discounts low-probability catastrophic outcomes in favor of high-probability mild gains, it reads social consensus as information, and its dopamine circuit rewards the anticipation of gain more reliably than the gain itself.

The hunt feels better than the meal.

Humans pretty reliably miscalculate risk at every step of the process, but somehow the profession of finance is built on the assumption that markets aggregate these miscalculations into wisdom. They don't. They aggregate them into stampedes, and herd cognition does the rest. When everyone around you is buying, the cost of not buying is both financial and social. You miss the gain, and your neighbor gets rich, and your brother-in-law mentions it at dinner. The brain treats this as a threat to status, and status, in primate terms, is survival. Solomon Asch's conformity experiments in 1951 showed that ordinary people will deny the evidence of their own eyes rather than disagree with a confident group, and bubbles are Asch experiments with money on the line.

Every bubble ends with the same discovery, which is that the asset was never worth what it traded for; every bubble starts, though, from the matching belief that this time, it is.

The strongman

The strongman arrives on schedule, and the preconditions are consistent: a frightened middle class, institutions that have stopped delivering, and an establishment that has lost the trust of the people it governs. Put those pieces in a room together and within a decade someone walks in who promises to cut through all of it. Caesar in 49 BCE. Napoleon in 1799. Mussolini in 1922. Hitler in 1933. Perón in 1946. A catalogue since then that hardly needs naming. The strongman is a phenotype; he's what the interaction between primate dominance hierarchies and political instability produces. Chimpanzee troops have alpha males, and human societies have them too. Under stable conditions, the alpha position is distributed across institutions, softened by law, and rotated by elections. Under unstable conditions, the position re-concentrates around a single body. Frans de Waal watched the same sequence play out among captive chimpanzees at Arnhem; Hannah Arendt watched it play out among human beings in the twentieth century. The mechanics were the same. The stakes differed only in body count.

Apparently, the human brain under stress doesn't want deliberation; it wants authority. Uncertainty burns more energy than bad news, and so the prefrontal cortex tries to resolve ambiguity, and when it fails, it hands control to older circuits that prefer a simple answer, any simple answer, to the right one. MRI studies of people presented with ambiguous political images show amygdala activation patterns nearly indistinguishable from fear responses; the feeling of not knowing whether your world is safe is, in brain-chemistry terms, very close to the feeling of being in danger.

And, sooner or later, there will always be someone willing to supply the simple answer. The man who says he alone can fix it believes it, because the crowd that believes it first has already told him so; what his opponents call a lie, he experiences as a revelation. The feedback loop between a frightened population and a would-be strongman runs on the same neurology in both directions: he needs them as much as they need him, and they produce each other.

The cycle tends to run thirty years from collapse to collapse. That is long enough for the generation that lived through the last strongman to die, and short enough that their grandchildren are available, ready, and willing to repeat the experiment.

The scapegoat

When a society is in pain, it finds someone to blame. Rarely the structure. Rarely the people who benefit most from the structure. Always someone weaker, someone already marginal, someone who can be sacrificed without the majority feeling the cost. Jews in medieval Europe during the Black Death, when entire communities were burned alive on the accusation that they had poisoned wells, and Jews again in Weimar Germany during the hyperinflation. Catholics in Elizabethan England, hunted by priest-catchers who were paid by the head, and Chinese merchants in Indonesia in 1965, and again in 1998. Tutsis in Rwanda in 1994, 800,000 dead in a hundred days, killed with machetes by neighbors who had lived next door for generations. Muslims in post-9/11 America. Immigrants, always, everywhere.

The mechanism was described by René Girard, a French literary critic who argued that violence against the innocent is the engine of social cohesion. His 1972 book Violence and the Sacred laid out the structure: a community in conflict with itself discovers that it can reconcile by turning collectively on a single victim, and the only requirement is that the accusation be unanimous. Guilt is beside the point, which is the part of this I find hardest to sit with; once the blow lands and the crowd goes quiet, the community feels cleansed. Girard's work sits uncomfortably among the more respectable social sciences because it says something his colleagues didn't want to hear: the crowd's sense of unity is purchased with the body of someone who didn't deserve to die, and the mechanism doesn't give a shit about ideology. It works for medieval Catholics, for Jacobin revolutionaries, for Nazi party members, for Twitter mobs. The crowd needs its victim, and the victim needs to be innocent enough that the guilt of destroying him is too heavy to carry, which is why the sacrifice must be followed by denial.

The scapegoat loop is neurology under pressure. The brain performs in-group and out-group sorting in under 200 milliseconds, before conscious perception arrives - a feature of human vision that kept small bands of primates alive on the savannah. A stranger at 40 meters could be trade or death. You didn't have time to think it through. Demagogues know this, or they feel it, which amounts to the same thing. They weaponize a perceptual shortcut human beings can't turn off, and they provide a face for a pain that has no face. The crowd does the rest.

The invention that eats its children

The printing press was going to democratize knowledge. And it did! But first, it launched more than a century of religious war. Johannes Gutenberg pressed his first Bible in 1455. By 1517, Luther's theses were being reproduced across Europe in weeks, and by 1618, the Thirty Years' War had begun. By its end in 1648, a third of the German-speaking population was dead. Elizabeth Eisenstein's The Printing Press as an Agent of Change (1979) documented how the technology that was supposed to bring light to the masses also industrialized the production of astrology, witch-hunting manuals, and anti-Semitic pamphlets. The press amplified everything, including the things its advocates hoped it would abolish.

Radio was going to educate the masses. It gave Hitler a direct line to every kitchen in Germany, Father Coughlin a direct line to thirty million American listeners in the 1930s, and the broadcasters of Radio Télévision Libre des Mille Collines the tool they needed to coordinate a genocide in Rwanda in 1994. Television was going to create an informed electorate - but it simultaneously created a visual electorate, which turned out to be a different thing. Marshall McLuhan saw all of this in Understanding Media in 1964 and was called a charlatan for saying so.

Social media was going to connect the world.

Well, it has, and the connection is the problem.

Every new tool that reshapes a society follows the same arc: it gets pitched as utopia, adopted before anyone understands it, panicked over about ten years too late, and regulated (badly) ten years after that. By the time the culture has a theory of what the tool does, the social fabric has already been re-stitched around it, in a structural mismatch between the speed of technological change and the speed of social adaptation. The brain adopting a new tool has never been the brain that understands its second-order effects, because the lag is biological. The telegraph took 50 years to saturate the industrialized world, but the internet took 20, and the smartphone took 10.

And generative AI has taken half of that to be near-ubiquitous…

The adaptation lag stays roughly constant while adoption keeps speeding up, which means each new technology is more disruptive than the last. We're adopting tools - right now - that will shape the next century without having metabolized the last century's tools; the printing press hasn't been fully understood, radio hasn't been understood, television hasn't been understood, and the side effects of social media are being lived through in real time by people who haven't yet admitted what it's doing to us.

The war that ends all wars

Humans don't go to war despite knowing what war does; they go to war because the knowledge of what it does fades, even though it technically exists. Nobody forgot the pain of WW1. It just became less vivid…

The generation that fought swears never again, and their children believe them, but their grandchildren might not. By the fourth generation, war is an abstraction, something that happened to other people, in old photographs, with outdated weapons. William Tecumseh Sherman spent his last years giving speeches against war to audiences who listened attentively, and then sent their sons to Cuba in 1898.

The French generation that survived 1918 built the Maginot Line because it couldn't imagine living through another Somme, but its sons were overrun by a tactic that didn't exist when the walls were poured, by an enemy they had forgotten to fear. Robert McNamara, the architect of the Vietnam War, was the subject of Errol Morris's 2003 documentary The Fog of War, in which he admitted that the policies he had designed had been wrong for reasons he actually understood at the time.

The film was released in 2003, the year the United States invaded Iraq; the lessons were on screen, broadcast to millions, but the tanks kept rolling.

You can teach someone that fire burns, but you can't make them feel the heat. A lesson that can't be felt won't prevent the behavior it describes. Wilfred Owen wrote Dulce et Decorum Est in 1917 about the sweet lie that dying for your country was noble. The poem is taught in every British secondary school; it has stopped zero wars. The interval between great-power wars in Europe from 1648 to 1945 averaged around forty years. That’s how long it takes for the generational memory of the last war to fade from the bodies of the people who vote in the next one. The post-1945 peace among Europe's great powers is the longest such stretch in recorded history, which means we have a decade or two before the generation that could say "I remember" no longer exists in political life.

What happens then is what always happens.

The moral panic

Witches in Salem, 1692, where twenty people were executed on evidence so thin the colony issued an apology within a generation. Catholics in Elizabethan London. Comic books in the 1950s, after Fredric Wertham's Seduction of the Innocent triggered a US Senate investigation and forced the creation of the Comics Code Authority. Rock and roll. Dungeons & Dragons, where a generation of American parents were convinced their children were being recruited into a satanic cult by a dice game. Video nasties in 1980s Britain. The Parents Music Resource Center, co-founded by Tipper Gore in 1985, dragging heavy metal into Senate hearings. Rap music. Violent video games after Columbine. Social media. TikTok. Transgender rights. I feel like I’m reciting a depressing cover of We Didn’t Start the Fire…

The moral panic follows the same sequence every time. A new thing emerges that the older generation doesn't understand, and someone somewhere claims it's destroying children. The media amplifies the fear, and legislation follows. The panic burns out.

Then, twenty years later, everyone agrees it was overblown.

Then, the next one begins.

The moral panic is a reaction to a loss of control; it's the terror that arises when a parent, or a culture, realizes the next generation is building a world they can't enter. The target changes every twenty years, but the terror doesn't change at all. The sociologist Stanley Cohen named the phenomenon in 1972 in Folk Devils and Moral Panics, writing about British seaside brawls between mods and rockers. The book could have been written about anything. Its template (a manufactured villain, a media cycle, a legislative response disproportionate to the threat) maps cleanly onto every subsequent panic, including the ones he couldn't have predicted. QAnon is a moral panic. So was the 1980s satanic-ritual-abuse craze that it grew out of, during which adults were sent to prison for crimes that forensic evidence later showed had never occurred. A panic doesn't have to be wrong to be a panic. It just has to be out of proportion, and they almost always are.

The empire

We all think our specific empire is the exception.

Rome believed it was eternal. The Chinese dynastic system believed in the Mandate of Heaven as a stable arrangement between rulers and cosmos, which is why each new dynasty claimed to have received the mandate from the last. The British believed their empire was a civilizing force that would last centuries. The Americans believe they're not an empire at all. Every imperial project follows the same arc: expansion driven by economic need, sold to the public as ideology. Overextension, and the cost of maintenance exceeding the benefit of possession. Internal rot funded by external extraction, and the slow or sudden loss of the periphery while the center insists everything is A-OK.

Edward Gibbon began publishing The Decline and Fall of the Roman Empire in 1776, the same year the American colonies declared independence from the British. Joseph Tainter's The Collapse of Complex Societies (1988) argued that civilizations fail when the marginal return on complexity flips negative, which is a technical way of saying that empires break when each new administrative layer costs more than it adds. The Romans kept adding layers until the layers collapsed under their own weight, and every subsequent empire has done the same.

Empire is an emergent property of human social organization at scale. Dominance hierarchies scale, as they always have, and they always produce the same endpoint: a system too large to govern, too expensive to maintain, too proud to contract voluntarily. The final stage is denial. The senators in Honorius's Rome debated traditional agricultural policy in 410 CE while the Visigoths were sacking the city; the Ottoman Porte in 1911 was still issuing decrees about the administration of the Balkans after it had lost them; the bureaucrats of the Third Reich were writing and sending memos at a record pace in the month or so before Hitler’s suicide; the British government after Suez spent a decade insisting that the empire was managing an orderly transition, a phrase that meant nothing because nobody was managing anything; the Soviet Politburo in 1988 was discussing the modernization of Cuban sugar exports while its own economy imploded.

When the center begins to legislate the future of a periphery it no longer controls, the collapse is already underway.

The God cycle

Religions rise when the existing structures of meaning collapse. They institutionalize, they accumulate power and wealth, they become the thing they were founded to resist, and they calcify. A new crisis of meaning arrives, and a new religion, or reformation, or spiritual movement rises up to replace them. The cycle runs from the Axial Age (Karl Jaspers's name for the period between 800 and 200 BCE when Confucius, the Buddha, Zoroaster, the Hebrew prophets, and the pre-Socratics emerged, each proposing a new relationship between the human and the cosmos) through the European Reformation, the First and Second Great Awakenings in America, the political religions of the 20th century, and the current explosion of secular faith substitutes - from Wellness to Bitcoin.

The true believers in CrossFit and the true believers in early 4th-century Arianism have more in common than either would like to admit. So do the adherents of long-form supplement protocols and the followers of Girolamo Savonarola, who burned the vanities in Florence in 1497 and was himself burned at the stake a year later. The impulse to purify the self through ritual deprivation is older than any of the current practitioners know - Bryan Johnson is reinventing the early Christian ascetic and selling it as biometrics. The brain requires narrative. When one narrative fails, it doesn't default to no narrative at all; it grabs the nearest replacement, however ragged. This is a neurological need for meaning and coherence that no rational framework has ever been able to satisfy. Michael Gazzaniga's experiments on split-brain patients, beginning in the 1960s, showed that the left hemisphere of the human brain will manufacture an explanation for any observed behavior, including behaviors it didn't cause, rather than admit it doesn't know.

The brain won't tolerate a gap in the story, and if you don't give it a religion, it will invent one. The content of belief changes but the need for it doesn't, which is why the most confident atheists end up sounding the most religious. It’s the same apparatus, different idol.

The exhaustion

Irrigation in southern Mesopotamia had left the fields salt-crusted and the population migrating by 2000 BCE; soil depletion in Roman North Africa turned the granary of the empire into desert within three centuries; the residents of Easter Island cut down every tree on the island, lost the ability to build canoes, and were reduced to eating the dead by the time Europeans arrived in 1722; the 19th-century guano trade reshaped Pacific geopolitics around bird excrement until the deposits ran out. Whale oil, coal, petroleum, silicon, compute, housing etc. And on it goes.

Every civilization finds a resource, builds itself around that resource, burns through it, and either collapses or scrambles for the next; it’s temporal discounting, the brain's systematic undervaluation of future consequences relative to present rewards, running at civilizational scale. The Atlantic cod fishery off Newfoundland was fished every year for 500 years, and then it collapsed so completely that Canada closed it in 1992; the cod have never come back.

The Canadian government knew the catch was unsustainable in the 1980s, but the boats went out anyway. They had mortgages to pay. Every generation knows it's borrowing from the future, but no generation stops. The cognitive machinery that would allow them to care enough doesn't exist.

The revolution that becomes the thing it replaced

The French revolutionaries executed a king and installed an emperor.

The Bolsheviks overthrew a tsar and built a new one, with secret police larger and more thorough than the Okhrana had ever been. The Iranian revolution deposed the Shah in 1979 and produced a theocracy whose morality police have arrested more women than SAVAK ever did. The anticolonial movements across Africa and Asia expelled foreign rulers and produced domestic dictators within a generation. The tech companies “disrupted” monopolies and became monopolies. Every revolution promises a break from the past and delivers a reproduction of it.

This is close to structural; the act of seizing power requires the construction of hierarchies, the concentration of authority, and the suppression of dissent, the exact things the revolution was against. The tools of liberation turn out to be the tools of control…they have to be, because they're the only tools that work.

Robespierre in 1793 believed he was defending liberty by executing 17,000 people in ten months. By the time the guillotine took him too, in July 1794, the mechanics of the Terror had built a state apparatus more centralized than anything Louis XVI had commanded. Milovan Djilas, once a senior official in Tito's Yugoslavia, wrote The New Class in 1957 from his prison cell, describing how the communist revolution had produced a bureaucratic elite with privileges indistinguishable from the aristocracy it replaced. He was right, which is why he was in prison.

The trap is in the method; you can't win by being peaceful against a state that isn't, and you can't build by refusing to govern. But the moment the revolutionaries become the government, they become the state, and the state has structural interests. Those interests don't care who's running it. George Orwell, who had seen the Spanish Civil War up close in 1937, understood this well enough to write Animal Farm about it in 1945 and Nineteen Eighty-Four about it in 1949. Both books are taught in schools, and both are cheerfully ignored in practice. The revolutionaries who most need to read them are always the ones who believe the books can't possibly be about them…

The Cassandra

Every loop has someone who sees it coming, and they're never believed.

The evidence is strong, but the warning is unwelcome, and unwelcome beats true...

Jeremiah in Jerusalem before the Babylonian conquest was ridiculed in the temple courts and thrown into a cistern, only to be vindicated after the fact by the destruction of everything he had warned about. Cato the Elder ended every speech with Carthago delenda est until his colleagues stopped listening. Churchill in the 1930s was frozen out of government, still warning about German rearmament to a House of Commons that preferred to discuss cricket. Eugene Stoner testified before Congress in the 1960s about the problems with the M16 rifle he had designed, and the Pentagon ignored him until American soldiers in Vietnam started being found dead with their rifles in pieces in their hands. The climate scientists of the 1980s, whose testimony was televised and archived and treated, for four decades, as the background noise of cable news. The economists who called the 2008 crash, including Raghuram Rajan at Jackson Hole in 2005, and were told by Larry Summers that their analysis was "slightly Luddite." The epidemiologists who warned about pandemic preparedness in 2015, whose reports were filed, then forgotten, then pulled off the shelf in March 2020 when there was no longer time to act on them.

Accurate prediction doesn't lead to prevention.

The reason is partly political: acting on a warning is expensive, and ignoring it is free, up until it isn't. But it’s equally cognitive: the brain treats vivid, dramatic threats as more real than slow, statistical ones, regardless of probability. We fear shark attacks more than car crashes, plane crashes more than heart disease, terrorist attacks more than obesity. The risks that kill us are not the risks that frighten us, because the brain evolved in an environment where the frightening things were almost always the things that killed us, and we haven't updated the pattern.

Cassandra herself, in Greek myth, was cursed by Apollo to always tell the truth and never be believed. Virgil gave her the line: insani Vatis verba, “the words of a madwoman.” Even without a divine curse, the outcome is the same: truth has rarely been sufficient.

The loop

The loops are caused by the species. Bad luck, bad leaders, and bad cultures show up in every story, but they don't generate the pattern. The pattern is downstream of the brain that produces the stories. That's the argument.

But can the loops be broken?

So far, the answer is discouraging; but they have occasionally been lengthened. The interval between crises has been extended, the damage mitigated, and the recovery accelerated. The post-1945 international order bought 80 years of relative peace in Europe by building institutions designed to resist the strongman loop - a massive, landmark accomplishment, but an accomplishment with an expiration date, because the institutions are only as good as the generation running them, and that generation is dying off in real time.

The bubble loop has been shortened in some respects by regulation and lengthened in others by cheaper borrowing; the scapegoat loop has been softened in many places by norms of tolerance, which the current decade is stress-testing; the empire loop has been delayed for the United States by a combination of military spending and currency dominance, neither of which is permanent; the invention loop has been accelerated by every successful attempt to regulate it, because the regulation creates markets for jurisdictional arbitrage that didn't exist before.

We're very good at making the loops run faster.

We're not so good at stopping them.

The loops persist because the brain persists, and you can't build a fence around a feature of human cognition. You just can't. The loops are a tendency of the species, and you can push back against a tendency, but only so far.

Seeing the loop while you're inside it is a good deal harder than it sounds. Every bubble feels like a new era, and everyone saying otherwise sounds like a total bore. Every strongman feels like a savior, at least until the night he stops taking questions, and every scapegoat feels like a real enemy, because your cousin lost his job last month and somebody has to have taken it. Every war feels necessary. Every panic feels justified. Every empire feels eternal and every new God feels true. Every resource looks infinite right up until it isn’t. Every revolution feels pure for about eighteen months. Every Cassandra looks hysterical.

Every mistake of the past was made by people who were certain they weren't making it.

The move, if there is one, is the move the Trojans couldn't make, the one the Weimar voters couldn't make in 1932, the one the subprime borrowers couldn't make in 2007, the one the Canadian cod fleet couldn't make in 1991. Treat the thing that feels obviously true with the utmost suspicion. Look for the loop in the direction you most want to walk. Ask whether the people you most agree with are the same people who would have agreed with the crowd at every previous iteration of this same mistake. It won't save you - but it might slow you down. The loop is older than any of us, and the loop has been true for 10,000 years. I think it will be true tomorrow. The only thing we get to decide is what we do with the knowledge in the interval between now and whichever loop is already closing around us.

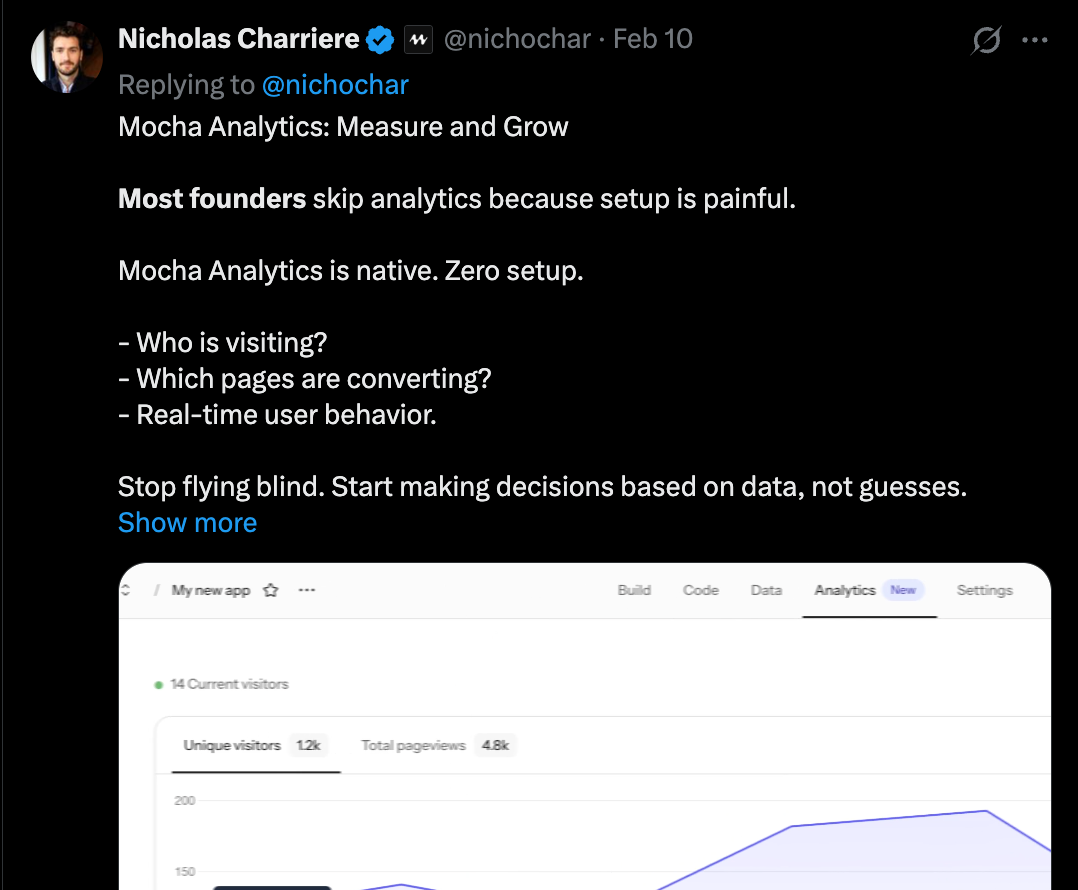

SPONSORED

Westenberg is designed, built and funded by my agency, Studio Self. Reach out and work with me:

Work with me