If you’ve ever visited a haunted house or a paranormal hotspot, you may have experienced a weird sense of unease that you couldn’t quite explain. While it’s tempting to imagine that these feelings signal the presence of ghosts or other supernatural entities, they may actually be caused by acoustic frequencies below 20 hertz, known as infrasound, according to a study published on Monday in Frontiers in Behavioral Neuroscience.

The human ear is not tuned to pick up infrasound, yet a growing body of research has shown that exposure to these frequencies can nonetheless cause negative feelings in humans and many other animals. Now, scientists have probed this mysterious link with a new experimental approach involving 36 volunteers who self-reported their moods while listening to various musical styles that sometimes included infrasound.

In addition, the volunteers provided saliva samples so the researchers could measure their levels of cortisol, a stress hormone, which offered physiological evidence that they were more stressed when exposed to infrasound. The results suggest that “infrasound may be aversive to humans, acting as a potential environmental irritant and contributing to more negative subjective experience,” according to the study.

“A lot of the literature seemed to tackle either one side of the conversation or the other, where people are looking at surveys and doing interviews with people, or they're looking into the physiology,” said Kale Scatterty, a PhD student at the Neuroscience and Mental Health Institute at the University of Alberta who led the study, in a call with 404 Media. “We wanted to use this as a first step in combining those approaches to get a whole picture of exactly what was happening with this effect.”

“It was surprising and exciting to see a significant difference in cortisol when the infrasound was turned on,” added Trevor Hamilton, a professor of psychology at MacEwan University who co-authored the study, in the same call.

For decades, scientists have linked infrasound to negative effects on humans and many other animals, though it is still not known how humans pick up on these sounds, or why we might have evolved an aversion to this frequency range. Given that natural sources of infrasound include dangerous events like volcanic eruptions, landslides, avalanches, intense storms, or stampeding animals, researchers speculate that humans and other species may have learned to interpret infrasound as a warning sign of impending disaster.

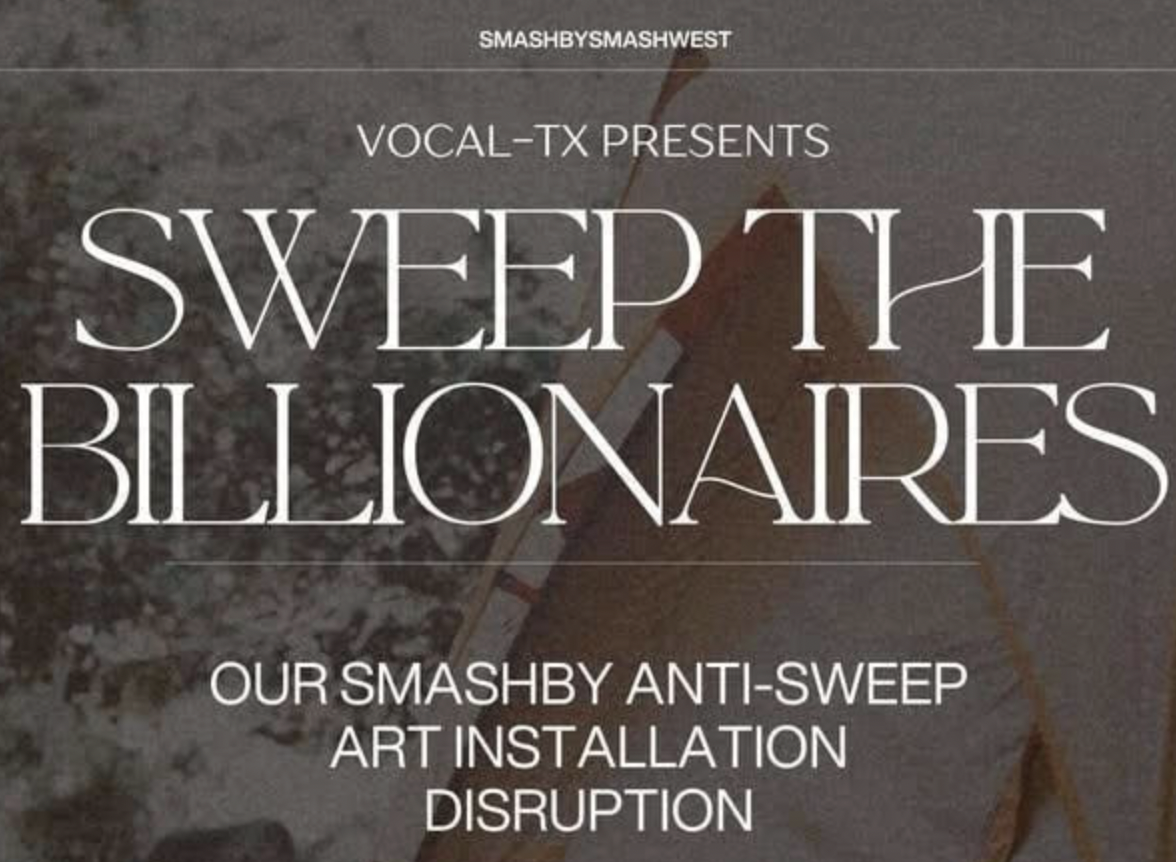

But, you may be asking yourself, where do the ghosts come in? Infrasound is also produced by a wide range of human-caused noise pollution, such as industrial machinery, wind farms, air conditioning units, busy roads and railways, or military activity in war zones. For this reason, many scientists have wondered if locations that are considered haunted or cursed in some way may sometimes be polluted by infrasound.

Rodney Schmaltz, a co-author of the study and a professor of psychology at MacEwan University, even organizes classes around taking his students to paranormal hotspots, such as the haunted house Deadmonton, to search for scientifically grounded explanations of their spooky allure. These field experiments revealed that playing infrasound at Deadmonton prompted visitors to move more quickly through the house.

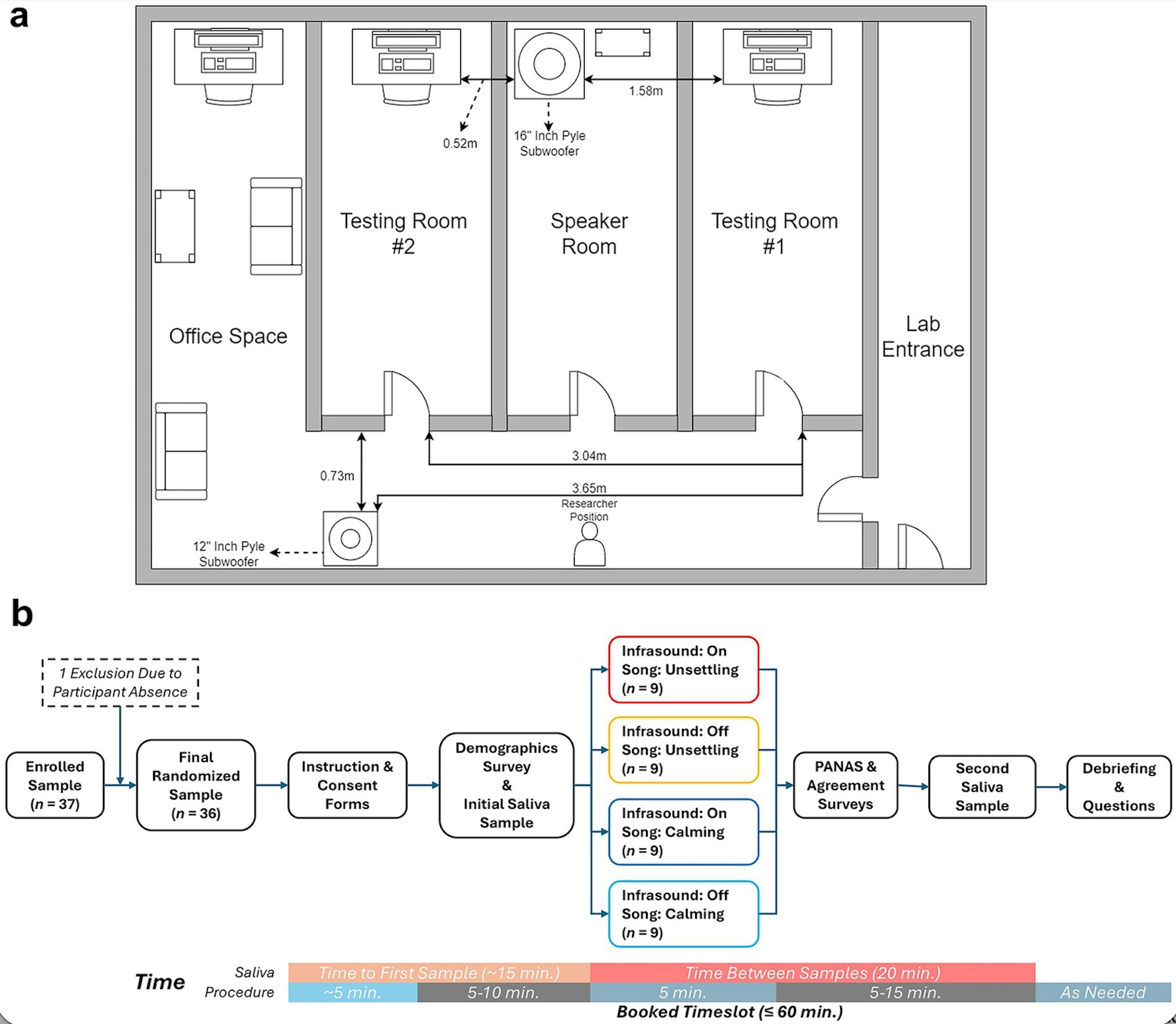

For the new study, the interdisciplinary team pooled its expertise and recruited 36 undergraduate psychology students at MacEwan University (27 women and nine men). Each participant sat alone in a room while calming or unsettling music played, and gave saliva samples before and after the session. Half of the participants were exposed to infrasound at 18 hertz while listening to both types of music. The participants were asked to report their feelings, rate the music emotionally, and say whether they thought infrasound had been played during their session.

The participants couldn’t consciously tell whether infrasound was played, but the elevated cortisol levels in the exposed group suggest that some part of their brains picked up on the frequencies, regardless of the type of music that accompanied them. Unlike many past studies, this research didn’t link infrasound exposure to heightened anxiety, though the exposed group reported more irritability, less interest in the music, and a sense that the music sounded sadder with infrasound.

The sample size of 36 is relatively small due to budget constraints—salivary cortisol tests are not cheap—but Scatterty’s team hopes their study offers a roadmap toward similar experiments that aim to pinpoint the mechanisms that cause infrasound to raise our hackles.

“We get very excited when we find something really positive like this, but for every single question we answer, we tend to have five more questions come up,” Scatterty said. “It's really hard to give any definitive answers. But for those who have curious minds, it's exciting to see where this kind of work could go. People who are interested in haunted houses and the paranormal might be having something to chew into here. People who are looking at the ecological side of things might interpret it as a noise pollutant for either humans or animals in nature.”

“It's really exciting for the potential it offers for future research,” he concluded.

404 Media, Samantha Cole